Ever wanted to read thousands of tech blogs? Now you can!


If you just want to see the site I made, feel free to scroll to the summary at the bottom. Otherwise, keep reading!

A few days ago, I saw an interesting thread on Hacker News: "Ask HN: Could you share your personal blog here?"

Working in the software development industry, I find it helpful to stay informed and sometimes just to hear about the interesting things that others are doing. Hacker News is also one of the more informative communities and has remained so over the years. Thus, it was no surprise that people started responding soon after:

However, almost 2000 responses is a bit too much content to wrap one's head around. How would you ever know which blog to pick, or where to start? Thankfully, someone else made another post with the fruits of their labor not long after: "Show HN: OPML list of Hacker News Users Personal Blogs"

What they had done is create a list of the RSS and Atom feeds that the linked blogs provide. I wasn't familiar with the OPML format myself, but it's essentially just XML that lets us aggregate all of that data in a single file, for our convenience. In other words, it's an automation goldmine:

So, I decided to brush up on my Python skills and make something useful...

Making something useful

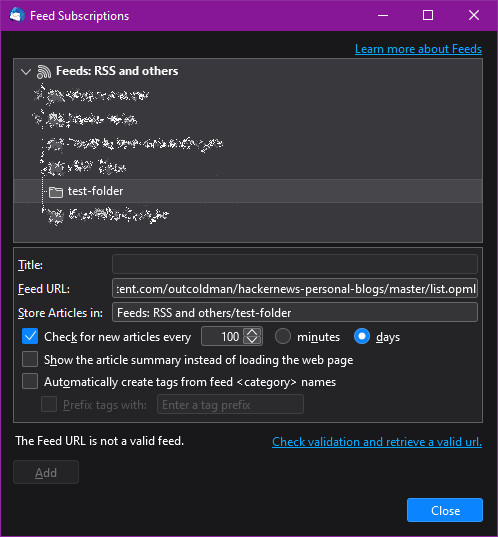

The idea is that I should be able to import the OPML file into my feed reader and call it a day, right? Well, unfortunately, Thunderbird doesn't want to play ball with us:

This could be explained by the fact that Thunderbird is primarily a mail client and its feed reading functionality is somewhat rudimentary. But even if we could import all of the feeds, there would still be thousands upon thousands of posts in them, which could feasibly kill many of the RSS readers out there. Also, some of the feeds are RSS, while others are Atom or even other formats, which might present a bit of a challenge to the reader software. However, since all of the data is available to us, that just seemed like an engineering problem: a problem that's waiting to be solved!

My plan was rather simple:

- Download the OPML list of all the feeds from GitHub

- Parse all of the links and download the feeds (RSS/Atom/...) from all of the blogs

- Combine them into feeds for each year, based on the date of posts

- Render some HTML for a website, so that people can browse the data and find the feeds easily
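The second step of that plan (getting the feed links out of the OPML file) can be sketched with the standard library alone. This is only an illustration, not the code behind the site: the sample document and the `extract_feed_urls` name are made up for the example, and it assumes the common OPML convention of storing the feed address in the `xmlUrl` attribute of each `outline` element.

```python
import xml.etree.ElementTree as ET

# Hypothetical sample; the real list from the HN thread is much larger.
SAMPLE_OPML = """<opml version="2.0">
  <head><title>Example blogs</title></head>
  <body>
    <outline text="Blogs">
      <outline type="rss" text="Alice" xmlUrl="https://alice.example/feed.xml"/>
      <outline type="rss" text="Bob" xmlUrl="https://bob.example/atom.xml"/>
    </outline>
    <outline text="No feed here"/>
  </body>
</opml>
"""


def extract_feed_urls(opml_text):
    """Return the xmlUrl of every <outline> element that has one."""
    root = ET.fromstring(opml_text)
    # iter() also walks nested outlines, so folder-style grouping is handled.
    return [
        outline.attrib["xmlUrl"]
        for outline in root.iter("outline")
        if outline.attrib.get("xmlUrl")
    ]


if __name__ == "__main__":
    for url in extract_feed_urls(SAMPLE_OPML):
        print(url)
```

Outlines without an `xmlUrl` (such as folders) are simply skipped, which is usually what you want when the goal is a flat list of feeds to download.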

Here's a quick diagram, which turned out simpler than reality (as most things do):

I understood that there'd be lots of data to deal with, so something like static rendering was probably the right way to go about things. That meant I essentially had to build a static site generator: something that outputs HTML and other files to be served directly by a web server (the OS can also cache these files in memory), so that I wouldn't run into performance issues or need powerful hardware.

For this, I went with Python: the actual static content generation speed isn't paramount, and Python has some great libraries that can help us:

- feedparser - lets us download and parse feeds of various types: RSS 1.0, RSS 2.0, Atom 1.0, JSON feeds and many others

- rfeed - lets us write our own RSS 2.0 format feeds to XML files, to export prepared data

However, there's a saying that was rather apt once I actually started working:

No Plan Survives First Contact With the Enemy

So, what went wrong?

The challenges along the way

In a few words, I underestimated the amount of data that I'd have to deal with: there were over 1200 individual feeds, totaling around 350 MB of XML data. I'm not sure whether I could quite call it "Big Data" and put it on my CV just yet, but it was definitely something that took some effort to deal with:

You see, money doesn't grow on trees and all I could afford for this project was a server with 4 GB of RAM - while quite powerful on its own, processing all of the data en masse (e.g. sorting it, parsing dates and so on) with Python in particular can bring us a bit closer to that limit, especially when we consider that we also need to run a web server on the same box.

Aside from that, there's also the issue of data quality: since I'm dealing with over 20 years of data, it's quite likely that date formats, character encodings and other data will be all over the place! If you look at some of the oldest posts I ended up extracting, if the timestamps are to be believed, there's even one that's older than me:

If we want to have a quick look at the many different date formats, we can also do that across all of the files a bit like the following:

    grep --max-count 1 --no-filename '<pubDate>' ./* > examples.txt
    grep --max-count 1 --no-filename '<published>' ./* >> examples.txt

We get all sorts of dates as a consequence of that, for example:

Thu, 29 Jun 2023 22:16:24 +0000

Sun, 21 May 23 00:00:00 Z

Wed, 05 Jul 2023 23:32:24 GMT

2023-07-07T13:31:23+03:00

2023-06-20T14:00Z

2023-04-19
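To give a feel for what normalizing these involves, here is a small standard-library sketch that handles the samples above. It is not how feedparser works internally and not the code used for the site; `parse_feed_date` is a hypothetical helper, and treating dates without an offset as UTC is an assumption made purely for the example.

```python
from datetime import datetime, timezone
from email.utils import parsedate_to_datetime


def parse_feed_date(raw):
    """Best-effort parse of a feed date string into an aware UTC datetime.

    Returns None when nothing matches, so callers can skip or flag the entry.
    """
    text = raw.strip()
    try:
        # ISO 8601 style, as seen in Atom's <published>. Older Python versions
        # reject a trailing "Z", so rewrite it as an explicit offset first.
        iso_text = text[:-1] + "+00:00" if text.endswith("Z") else text
        parsed = datetime.fromisoformat(iso_text)
    except ValueError:
        try:
            # RFC 822 style, as seen in RSS's <pubDate>.
            parsed = parsedate_to_datetime(text)
        except (TypeError, ValueError):
            return None
    if parsed.tzinfo is None:
        # Assumption: dates without an offset are treated as UTC.
        parsed = parsed.replace(tzinfo=timezone.utc)
    return parsed.astimezone(timezone.utc)


if __name__ == "__main__":
    for sample in ("Thu, 29 Jun 2023 22:16:24 +0000", "2023-06-20T14:00Z", "2023-04-19"):
        print(sample, "->", parse_feed_date(sample))
```

Converting everything to one timezone up front makes later sorting and grouping by year much less error-prone than comparing the raw strings.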

While there's nothing wrong with the formats themselves, parsing them will still require a bit of attention. Thankfully, the aforementioned feedparser library makes this easier for us, at least when the dates are present and when the data isn't malformed in some other way. Speaking of malformed data, we also need to be prepared to have invalid characters in the feeds that we get, because all it takes is for one blog to have faulty data, to ruin all of them for us:

(this is before I split up the feeds by year, because most folks won't want to work with 20-100 MB large feed files)

If we go to that particular place in the file, we see the following:

Imagine some blog post from 2009 ruining your ability to interact with thousands of others due to a single invalid character. If we check what sort of character it is, it appears that it's actually a control sequence, one that probably has no business being in a blog post on the Internet:
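For anyone who wants to hunt for such characters themselves, the following sketch lists (and optionally strips) everything that falls outside the character ranges XML 1.0 permits. The helper names and the sample string are hypothetical, and this targeted approach is shown only for illustration.

```python
import re

# Matches anything outside the ranges allowed by the XML 1.0 "Char" production:
# tab, newline, carriage return, and the printable Unicode ranges.
INVALID_XML_CHARS = re.compile(
    r"[^\x09\x0A\x0D\x20-\uD7FF\uE000-\uFFFD\U00010000-\U0010FFFF]"
)


def find_invalid_xml_chars(text):
    """Return (offset, code point label) pairs for characters XML 1.0 forbids."""
    return [
        (match.start(), f"U+{ord(match.group()):04X}")
        for match in INVALID_XML_CHARS.finditer(text)
    ]


def strip_invalid_xml_chars(text):
    """Drop the forbidden characters and leave everything else untouched."""
    return INVALID_XML_CHARS.sub("", text)


if __name__ == "__main__":
    sample = "Hello\x08 world\x1b!\n"  # made-up text with a backspace and an escape
    print(find_invalid_xml_chars(sample))
    print(repr(strip_invalid_xml_chars(sample)))
```

A filter like this only covers forbidden characters; it does nothing for other kinds of malformed markup, such as unclosed tags.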

While I could have just removed those particular bad characters, the fact of the matter is that we need to plan for similar occurrences in the future that are hard to foresee. Because of that, the approach I chose was to dump my prepared XML and then read it back in with the etree.XMLParser functionality, attempting to recover it along the way (as described here):

    from lxml import etree  # recover=True is an lxml feature

    def clean_xml(feed_contents):
        # Parse leniently, so that broken input doesn't abort the whole file
        my_parser = etree.XMLParser(recover=True)
        xml = etree.fromstring(feed_contents.encode(), parser=my_parser)
        # Serialize the recovered tree back into a string
        cleaned_xml_string = etree.tostring(xml, encoding='unicode')
        return cleaned_xml_string

But even with that and some formatting out of the way, there was still the issue of performance: reaching out to over 1200 different blogs on the Internet is unlikely to be fast if you do it sequentially. Because of that, I needed the equivalent of items.for_parallel, which Python doesn't make as easy as something like Java does (parallel streams are nice), yet with a bit of work we can still achieve what we need:

    from multiprocessing.pool import ThreadPool

    parallel_threads = 10
    thread_pool = ThreadPool(parallel_threads)
    # map() blocks until every download_feed call has finished
    thread_pool.map(download_feed, download_tuples)
    thread_pool.close()
    thread_pool.join()

The only weirdness I encountered was the fact that the .map method takes a function as an argument (the task to be executed), but only passes a single argument to it. Ergo, we needed to pass in a bunch of tuples, something that most people who write Go will also be familiar with:

    return (feed_xml_url, local_feed_xml_filename, local_download_directory)

But the challenges don't quite end there. I needed a lot of testing to get to a point where I'm satisfied with the application:
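As a side note on the thread pool: here is a self-contained sketch of the tuple-passing pattern, next to `starmap`, which unpacks each tuple into separate parameters. The two download functions are stand-ins that touch neither the network nor the disk, and the URLs and file names are invented for the example.

```python
from multiprocessing.pool import ThreadPool


def download_feed(download_tuple):
    """Stand-in for a real download: unpack the tuple and report the target path."""
    feed_xml_url, local_feed_xml_filename, local_download_directory = download_tuple
    # A real version would fetch feed_xml_url over HTTP here (omitted on purpose).
    return f"{local_download_directory}/{local_feed_xml_filename}"


def download_feed_args(feed_xml_url, local_feed_xml_filename, local_download_directory):
    """Same stand-in, but with ordinary positional parameters, for starmap()."""
    return f"{local_download_directory}/{local_feed_xml_filename}"


download_tuples = [
    ("https://alice.example/feed.xml", "alice.xml", "downloads"),
    ("https://bob.example/atom.xml", "bob.xml", "downloads"),
]

# map() and starmap() both block until all results are in, so it is safe for
# the context manager to shut the pool down when the block ends.
with ThreadPool(10) as thread_pool:
    via_map = thread_pool.map(download_feed, download_tuples)
    via_starmap = thread_pool.starmap(download_feed_args, download_tuples)

print(via_map)
print(via_starmap)
```

Both calls return results in the same order as the input list, regardless of which thread finishes first.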

Testing means re-generating lots of data, again and again. Clearly, we shouldn't re-download multiple hundreds of MB for each run, nor do we want to go through all of the transformations supported. Because of that, I wrote the logic in stages, with one type of transformation per file:

Then the main Python file just needs to glue these bits together, like so:

    def main():
        opml_from_github.get()
        all_rss_feeds.get()
        combined_rss_feed.make()
        rss_feeds_and_html.create()

What's more, we can allow each of those files to be executed individually, by adding the following:

    if __name__ == '__main__':
        os.chdir('..')  # If we run stuff in logic directly, we need to make the working directory be one level up
        get()

(example of opml_from_github.py)

PyCharm even makes this easy with a helpful context action, which will create a run profile in the IDE:

Other tools could definitely take notice of this feature, especially when wanting to run individual test cases, too!

The end result

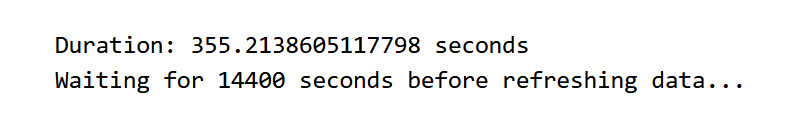

There's a bit more to the whole process, which kept me busy for most of yesterday and this morning, but all of it was necessary just so I could see the following in the logs:

So what happens periodically is that I delete the previously downloaded files, download everything again, and then parse, combine and sort all of the feeds. Then I can replace any old files, if present, in a directory that's available to a web server, so that the new ones can be served. Thus, thanks to static files, the performance is as good as it can be (I still use some compression to be respectful of others' bandwidth), while the actual code that generates the data can be written in a simpler language like Python, even if its runtime performance isn't amazing.

Even the end result is as simple as it gets: just a bunch of files with generated hyperlinks between them. You don't even need JS enabled to view all of the content. If only things could be this simple more often:
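The "combine and sort" part of that cycle might look roughly like the sketch below, which groups posts into per-year buckets. The `Post` class and `group_posts_by_year` function are hypothetical, and putting the newest posts first is an assumption for the sake of the example rather than a description of the actual generator.

```python
from collections import defaultdict
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass
class Post:
    title: str
    link: str
    published: datetime


def group_posts_by_year(posts):
    """Return {year: [posts, newest first]}, with the years also newest first."""
    by_year = defaultdict(list)
    for post in posts:
        by_year[post.published.year].append(post)
    return {
        year: sorted(by_year[year], key=lambda p: p.published, reverse=True)
        for year in sorted(by_year, reverse=True)
    }


if __name__ == "__main__":
    example_posts = [
        Post("Old post", "https://alice.example/old", datetime(2009, 3, 1, tzinfo=timezone.utc)),
        Post("Spring", "https://bob.example/spring", datetime(2023, 4, 19, tzinfo=timezone.utc)),
        Post("Summer", "https://alice.example/summer", datetime(2023, 7, 7, tzinfo=timezone.utc)),
    ]
    for year, posts in group_posts_by_year(example_posts).items():
        print(year, [post.title for post in posts])
```

Each bucket can then be written out as its own feed file and HTML page, which keeps any single file at a manageable size.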

But what's the benefit to you, the reader?

Summary

It's a site that you can view yourself, here: Hacker News Personal Blogs

Perhaps it's not the most aesthetically pleasing site in the whole world, but I'm actually proud of how it turned out. You can choose to browse either the blogs by top 100 users (less data), or all of the blogs for a given year (still shouldn't be too bad, except on mobile data perhaps):

As a matter of fact, you can even view a list of all the blogs, not just the posts, which are ordered by the karma that the corresponding Hacker News users have, with the most popular ones first:

The actual pages are also relatively lightweight: even the largest one, with all of the blog posts for a given year (around 6400 posts in total), is smaller than your typical news site. I contemplated adding paging, but I don't think that most browsers will struggle too much with the number of DOM elements present:

Because of my work on getting RSS feeds working, you can even import the feeds into your feed reader of choice and consume the content that way, instead of using a browser:

You get formatting and pictures where available, too! All in a reasonably formatted RSS 2.0 format, without odd control characters.

Now, I haven't personally found a standalone feed reader that I enjoy using: managing subscriptions is a bit weird in Thunderbird, you delete one and then it tells you that you can't re-add it in another folder because you're already subscribed... But hopefully this is useful for someone regardless, and the server manages to stay up for a while! At least everything's running in containers with resource limits, so restarts should happen automatically, if needed.

I also posted on HN about this project, but it doesn't seem like it got any attention. Oh well, that's life.
