manavakcina.lv and apturicovid.lv


Having participated in the creation of https://links.kronis.dev/WhQA0TQKxm, the homepage for the Apturi Covid app (well, actually all of what you see on the site was written by me, including the APIs that fetch the data and the private CMS, excluding the text contents and illustration pictures), and now seeing that someone is attempting to create https://links.kronis.dev/5dob2Nvzt6, i figured that i could compare how their site fares against mine.

Surely, the actual workloads are pretty similar, aren't they? A website that needs to handle moderately high loads, like ~C10K, and do some light data processing along with it. My site primarily provided the users with information about the app, as well as served as a place to familiarize oneself with the FAQ, the privacy policy and the terms of use, maintain the media materials for download, as well as forward the users to the download pages for the apps, all served in multiple languages and with the ability to customize the content. Their site primarily needs to provide the information about the ability to vaccinate, integrate with external authentication methods (https://links.kronis.dev/3qsU8GYYA5) and allow inputting some simple data that should be persisted. My site is mostly read-heavy (since the data in the CMS is consumed by a lot of users) whereas theirs also has to deal with some write workloads, but surely that doesn't prevent me from approximately comparing them, right?

apturicovid.lv

The site itself was developed in a bit of a rush and with limited resources, given that it was necessary to inform people about the applications on short notice, as well as because it was an entirely voluntary project by a number of companies, including mine at the time (SIA "Autentica"), which is how i got to participate in the initiative in the first place. It's written primarily with React for the front-end components and some light Node.js for the back-end stuff and was successfully containerized to allow scaling it horizontally. I won't provide much more information about the actual architecture behind it or finer details about the components here, because we're mostly focused on the overall functioning of the page itself:

And, in this particular case, it seems like it works pretty decently! The page generally loads pretty quickly, is under 1 MB in size, thanks to gzipping its contents and code-splitting so that you don't have to load a bloated PDF viewing library that's needed by the terms of service and privacy policy pages, as well as because the images are lazily loaded in many of its parts, so that stuff that's off-screen will only be loaded when it's necessary. That said, it's still heavier than i'd like it to be, mostly because of the images and a whole bunch of Bootstrap/React code to make it more interactive and also support multiple languages (as well as filter which data is or is not available based on the container's environment variables):

In addition, i used some pre-rendering approaches, because while i didn't have the time and resources to use server side rendering to support browsers without JavaScript enabled, at least above-the-fold content will be shown even in browsers that don't support JavaScript or choose not to have it enabled. You'll even be able to navigate between the pages, download the PDF files and view the companies that participate in the initiative, however the dynamic content won't work. Personally, i think it's pretty sad that we require JS for pages to work, but then again, we're stuck creating these "interactive" experiences and no one really seems to like SSR or even static pages that much.

In addition, i also have a development environment running on my own servers, which i used to develop new versions of the site and follow the best CI/CD practices, given that the bureaucracy to get everything working on LVRTC infrastructure for testing purposes would have been pretty difficult. Testers actually enjoyed it and since no private/restricted information needed to be published in the system, overall it was a pretty good solution! I just wish that development tended to move more towards the self-service approach, but until then, having a homelab was definitely useful:

As an additional thing to mention, the actual front-end webapp does use a CMS for getting all of the dynamic content that it needs. But just for fun (this will become relevant later), let's suppose that the back-end services are not available for some reason. Actually, let's do just that and misconfigure the URL that the front-end will attempt fetching data from:

environment:
  - BASE_URL=https://non-existent-api.covid.kronis.dev

The site will not only work, but it will display most of the data that you would normally see, albeit a slightly older version:

Now, normally you would never see this occur, but in the event that it did happen (e.g. someone messed up the data in the CMS, the nodes running the CMS went out of order and the container re-scheduling needed a bit of time, there was a network outage or something else went wrong), the site would be mostly operational nonetheless. This was done by baking the site's resources into the container itself at build time, which was initially how it was easily scaled to deal with pretty big loads! Of course, the CMS was added later, but the synergy of those two mechanisms performed pretty well!
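The fallback behaviour described above can be sketched roughly as follows. This is not the site's actual code (which is React and Node.js), just a minimal Python illustration of the idea; the names `BAKED_IN_CONTENT`, `load_content`, `broken_cms` and `healthy_cms` are hypothetical.

```python
import json
from typing import Callable

# Hypothetical snapshot of the CMS content, baked into the image at build time.
BAKED_IN_CONTENT = {"faq": ["How do I install the app?"], "version": "build-time"}


def load_content(fetch_from_cms: Callable[[], str], fallback: dict) -> dict:
    """Return fresh CMS content, or the baked-in snapshot if the CMS fails."""
    try:
        return json.loads(fetch_from_cms())
    except (OSError, ValueError):
        # OSError covers connection failures and timeouts, ValueError covers
        # malformed JSON; both fall back to the older, known-good data.
        return fallback


def broken_cms() -> str:
    """Simulates the misconfigured BASE_URL: the request never succeeds."""
    raise ConnectionError("non-existent API host")


def healthy_cms() -> str:
    """Simulates a working CMS that returns newer content."""
    return json.dumps({"faq": ["Updated answer"], "version": "live"})


if __name__ == "__main__":
    print(load_content(broken_cms, BAKED_IN_CONTENT)["version"])
    print(load_content(healthy_cms, BAKED_IN_CONTENT)["version"])
```

The point of the design is that the read path never depends on the CMS being reachable: the worst case is slightly stale content rather than an error page.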

So, as a master's degree student at RTU (which i've since completed with a 10/10 grade), i managed to create a somewhat competent page and it worked in production with relatively few issues. That doesn't set the bar awfully high, now does it? I'm sure that this new page, developed by the government, would fare much better.

manavakcina.lv, the beginning

Well, not quite.

Essentially they messed up one of the basic rules of software development - always having separate development, test and production environments. So apparently, they initially published the site that let about 700 people register, even though that was just test data and because of it made the app look a bit worse than it otherwise would. Okay, that's not awfully cool, but is still probably not the worst thing in the world. I can't talk about their development practices, but blunders do happen to everyone occasionally (such as using @test.lv for sending test data and getting e-mails from them because someone forgot to use MailCatcher for the environment ¬_¬).

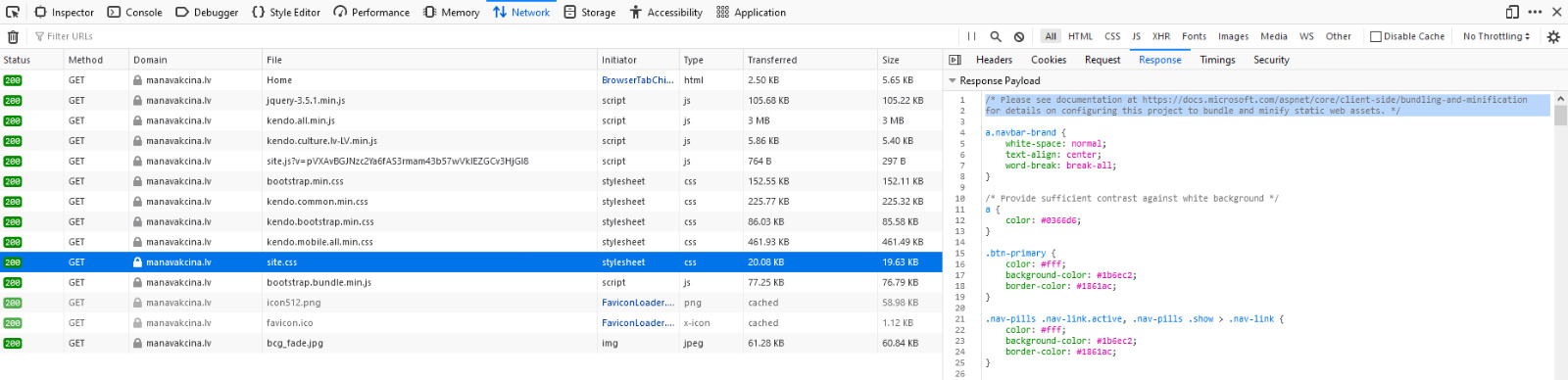

But surely, their site would be way better, right? Well, no, no it wasn't. At least the first version seemed like it was on track for becoming a pretty poor solution:

While the page looked decent enough, it was larger than 4 MB! In addition to that, the received and transferred sizes were the same, which implied that it was served without any compression. A cursory glance at the resources used by it revealed that they weren't minified either. Funnily enough, the actual source code for ASP.NET Core gave instructions on how to do what they hadn't bothered to:

Not only that, but it seemed to use a trial version of Kendo UI. That's probably okay for testing, but smells like a big yikes if you decide to use unlicensed software in production on a governmental site. Software licensing matters, even if people oftentimes like to pretend that it doesn't and ignoring that probably wouldn't leave a good impression on the people actually using it, nor would it make you seem all that trustworthy (sans actual lawsuits):

Overall, it seemed like the site was on the path to becoming a technical failure, but surely things would get better before it was made available in production?
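As an aside, the transferred-versus-received size check mentioned earlier is easy to reason about: when a server compresses its responses, fewer bytes travel over the wire than the browser ends up with. A small sketch of how much that can matter for script-like text, using a made-up, repetitive payload as a stand-in for an unminified bundle (real bundles will compress less dramatically):

```python
import gzip

# Hypothetical stand-in for an unminified script: repetitive, whitespace-heavy text.
SCRIPT = ("function showMessage(text) {\n    console.log(text);\n}\n" * 2000).encode("utf-8")


def transfer_ratio(payload: bytes) -> float:
    """Return compressed size divided by original size for a gzip-encoded payload."""
    return len(gzip.compress(payload)) / len(payload)


if __name__ == "__main__":
    compressed = gzip.compress(SCRIPT)
    print(f"original:   {len(SCRIPT)} bytes")
    print(f"compressed: {len(compressed)} bytes")
    print(f"ratio:      {transfer_ratio(SCRIPT):.3f}")
```

If the transferred and received sizes in the browser's network tab are identical for text resources, the ratio is effectively 1.0, which is what suggests that no compression is configured.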

manavakcina.lv, technical hurdles

Well, it actually did, but not at first. As a matter of fact, initially things just got worse. If you tried loading the page some time before it was published, you instead got a message from Cloudflare about the site being under attack:

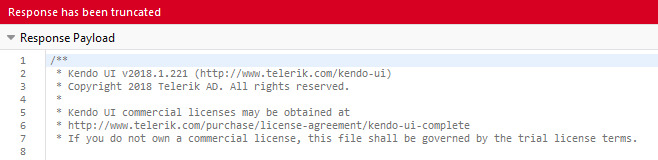

Now, at this point i doubt that it was actually under attack, because there really wasn't all that much to attack, but the users seeing messages like that probably doesn't inspire confidence either, especially if it could have just stayed a static "Coming Soon" page or something like that. Out of curiosity, i actually set up Zabbix web monitoring for the site, just to see how it performs over time, because the initial splash page was noticeably slower to load than my Apturi Covid page:

Sidenote: you probably should have monitoring like that for your own sites. Not seeing when your site is up and down, and not being able to track how its performance changes over time is pretty much unacceptable. You don't even have to use the super modern stacks of Grafana and Prometheus if you don't feel like it - even old fashioned Zabbix is perfectly good for this task. Personally, it definitely makes me feel safer in not missing outages (or not letting my clients be the first ones to find out about them), especially because most of these tools support integration with alerting systems as well. In my opinion, dashboards like that are a must:

It actually stayed that way for a few days! My hopes weren't too high at this point, but thankfully, it actually turned out better than i had expected!

The problems over at latvija.lv

And by that, i mean that it failed pretty predictably. It was made available for usage at around 10:00 in the morning, which is when we finally got to see the version of the site in production:

The actual production site was pretty good, but it didn't take long for things to go somewhat wrong. In this instance, the site itself held up pretty well, but the system that was used for authorization, latvija.lv, failed at doing the only thing that it should have been able to do in that context. As a result, its functionality was disrupted and both various media sources and people themselves soon reported as much:

Of course, it's actually good that many people wanted to get vaccinated, because at this point we have over 68k cases of COVID in our country and 1.25k deaths, which isn't as bad as in other places in the world, but still should be fought against with whatever "weapons" we have, vaccinations being a promising solution for this problem. Even Andris attempted to put a positive spin on the situation here, but let me be perfectly clear - it's simply unacceptable for that system to go down, and it's embarrassing that it did.

You see, there are lots of other governmental systems that use it for authentication and authorization, and as a consequence of this sudden load, their functionality was also disrupted, preventing many people from receiving the services that they need:

One might actually think that 3000 people per minute is a lot and that it's an unfortunate, yet understandable blunder, something that was caused by the unexpectedly high demand and that no one could have foreseen. Here, i completely disagree! Every system, as well as its integrations, should be tested against either their production environments (inadvisable, yet sometimes unavoidable in our world of SaaS), or their staging environments, with data that's similar to the stuff you'd see in production, running on similar infrastructure. This entire situation implies that they simply either didn't know how to do that, or didn't bother spending an evening or two setting up authentication mocks, or simply didn't have the support for testing that particular functionality in the first place! Having integrated with their services in the past, i can almost guarantee that it's mismanaged on some level and that the people in charge simply don't care about load testing and similar aspects of development as long as the system seems to work. Until it suddenly no longer does.
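To make the "authentication mocks" idea a bit more concrete, here is a minimal sketch of load testing against a stand-in for an external login provider. Everything in it is hypothetical: `MockAuthService` is not modelled on any real latvija.lv API, and a real load test would use a dedicated tool rather than a thread pool, but the shape of the exercise is the same.

```python
import threading
import time
from concurrent.futures import ThreadPoolExecutor


class MockAuthService:
    """Hypothetical stand-in for an external login provider with limited capacity."""

    def __init__(self, max_concurrent: int) -> None:
        self._slots = threading.BoundedSemaphore(max_concurrent)

    def authenticate(self, user_id: int) -> bool:
        # Reject immediately when all slots are busy, like an overloaded upstream.
        if not self._slots.acquire(blocking=False):
            return False
        try:
            time.sleep(0.01)  # simulated processing time
            return True
        finally:
            self._slots.release()


def run_load_test(service: MockAuthService, users: int, workers: int) -> dict:
    """Send one login per simulated user through a pool of concurrent workers."""
    with ThreadPoolExecutor(max_workers=workers) as pool:
        results = list(pool.map(service.authenticate, range(users)))
    succeeded = sum(results)
    return {"total": users, "succeeded": succeeded, "failed": users - succeeded}


if __name__ == "__main__":
    # More concurrent workers than the mock can serve: some logins get rejected.
    print(run_load_test(MockAuthService(max_concurrent=5), users=200, workers=50))
    # Enough capacity for every worker: no login is rejected.
    print(run_load_test(MockAuthService(max_concurrent=50), users=200, workers=50))
```

Even a crude mock like this shows whether the calling system degrades gracefully when the integration starts refusing requests, which is the question that apparently went unasked.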

Besides, what kind of a number is 3000 people per minute? Try 120'000 people per minute! You see, for my master's, i actually developed a system and load tested it on both Docker Swarm and Kubernetes clusters, to see how well both of those technologies could support horizontal scaling and how well crashed containers would be rescheduled (well, the actual master's thesis was about developing a tool for automating the creation of container clusters, whereas this was merely the side effect of needing to test how well they performed). As a part of the testing, i slammed the servers with as much load as i could, just to see when they'd break:

Now, given that i rented just a few servers with a measly 1 CPU core and 4 GB of RAM from Time4VPS (don't get me wrong, they're an awesome Lithuanian VPS provider, i'm just poor), the system's performance wasn't too stellar, especially because i (intentionally) wrote it in Ruby and Ruby on Rails to simulate quickly developed solutions with interpreted rather than compiled languages. Yet, it could process 2000 concurrent users, each sending requests every second, that need to hit a DB, look up data with JOINs and indexes, as well as write some data back into it once the validations succeed.
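For reference, the 120'000 figure follows from the numbers above if each virtual user really does manage one request per second; that is an upper bound on the offered load, since a slow response delays the next request. A quick worked calculation (the variable names are just for illustration):

```python
CONCURRENT_USERS = 2000
REQUESTS_PER_USER_PER_SECOND = 1
REPORTED_LATVIJA_LV_PER_MINUTE = 3000  # the "3000 people per minute" figure


def requests_per_minute(users: int, per_user_per_second: float) -> float:
    """Nominal offered load per minute, assuming no user is ever slowed down."""
    return users * per_user_per_second * 60


if __name__ == "__main__":
    nominal = requests_per_minute(CONCURRENT_USERS, REQUESTS_PER_USER_PER_SECOND)
    print(f"nominal load: {nominal:.0f} requests per minute")
    print(f"ratio to the reported figure: {nominal / REPORTED_LATVIJA_LV_PER_MINUTE:.0f}x")
```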

In case you're interested, here's a table with some of the aggregated results, showing that those instances were capable of performing anywhere from 300'000 to 500'000 requests over the span of 30 minutes, with over 90% of them succeeding in every test scenario (even when the applications were configured sub-optimally or Kubernetes was thrashing the weak CPUs):

Let me get that straight: i, just a student, was able to create a system which could deal with a far greater load for under 50 euros a month? As opposed to a governmental organization being unable to scale their system to deal with a few thousand users, their budget dwarfing anything i could ever dream of (helpfully, a news story about them was on the TV in the background, which said that running their system costs 8000 euros per month, which comes out to me being 160 times more efficient)? Maybe i should take over the system instead, spend my 50 euros and pocket the remaining 7950? I don't know about you, but that smells like incompetence to me, and others have also taken notice:

Funnily enough, the system itself knew what's up:

That's pretty bad indeed! And kind of embarrassing, to be honest. No, not just because they were unprepared for this, but also because there are other concerns at play here. The system being so frail and yet so widely integrated implies that some script kiddie with a few thousand dollars in Bitcoin to spare could absolutely cripple many of the Latvian governmental systems! Are they even using CDNs or points of ingress with DDoS protection? It wouldn't surprise me if they don't!

Actually, glancing at their site now implies that they're running on Windows based infrastructure with IIS and ASP.NET (not even Core) as the backing service (of course, the tool also indicated that there's React and jQuery there, which, short of a really odd micro front-end setup, would be pretty weird):

No offense to Windows Server, but in my subjective experience it might serve as a red flag here - an indication that they probably haven't invested an awful lot of resources in scaling and perhaps aren't entirely up to date with orchestrating horizontally scalable systems. Heck, maybe not even Docker and Kubernetes, but just Apache Mesos or similar solutions. Of course, i could just be wrong, but then again, the situation itself doesn't inspire much confidence.

The Latvian government just generally is bad at scaling

That's about as bad as that one time when the VID EDS taxation system didn't work because it couldn't scale:

I remember a government official claiming that making the system work under those circumstances would be "too expensive". No, no it wouldn't be too expensive - it would simply require you to have read about horizontal and vertical scaling and implement solutions for problems that the industry has largely solved in the last 10 years, be it load balancing, database clustering, event based systems with queues, or anything else, really.

The fact that you think that 30'000 concurrent users is a big problem simply highlights the fact that you haven't been up to date with the development practices since the early 2000s. It's not okay for software to roll over and die if you have 10k, 20k, 30k or 100k concurrent users in a country with 2 million people in it. You absolutely do not need to be Google to cope with loads like that. If your services are resource constrained and you cannot scale, you should at least have rate limits in place. If your system is distributed and certain cascading failures happen to it, it should still be able to at least partially continue working until the self-healing mechanisms take over, provided that you have any in place.
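As an illustration of how little it takes to have rate limits in place, here is a minimal token bucket sketch. It is one common technique among several, not something the systems discussed here are known to use, and the `TokenBucket` class is hypothetical; the clock is passed in explicitly so that the behaviour is easy to reason about.

```python
class TokenBucket:
    """Allows short bursts up to `capacity`, then `rate_per_second` sustained."""

    def __init__(self, rate_per_second: float, capacity: int, now: float = 0.0) -> None:
        self.rate = rate_per_second
        self.capacity = capacity
        self.tokens = float(capacity)  # start full, so an initial burst is allowed
        self.updated_at = now

    def allow(self, now: float) -> bool:
        """Return True if a request arriving at time `now` (seconds) may proceed."""
        elapsed = max(0.0, now - self.updated_at)
        # Refill proportionally to the elapsed time, never beyond capacity.
        self.tokens = min(self.capacity, self.tokens + elapsed * self.rate)
        self.updated_at = now
        if self.tokens >= 1.0:
            self.tokens -= 1.0
            return True
        return False


if __name__ == "__main__":
    bucket = TokenBucket(rate_per_second=1.0, capacity=3)
    # Four requests at the same instant, then one more a second later.
    for timestamp in (0.0, 0.0, 0.0, 0.0, 1.0):
        print(timestamp, bucket.allow(timestamp))
```

Requests that are refused can get a fast, cheap "try again shortly" response, which is considerably kinder to both users and downstream systems than letting everything time out.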

Actually, let me leave you with an excerpt of the 12 Factor App page, which is a wonderful guide for developing both containerized and non-containerized cloud native apps that are both scalable and fault tolerant:

manavakcina.lv, a game of patience

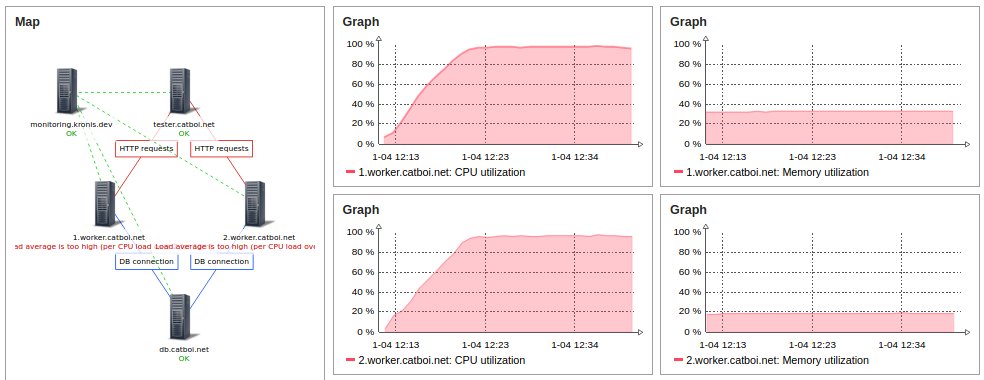

Okay, i'm almost done ranting about how bad things are. Before we get into the good stuff, let me share something about the actual manavakcina.lv site that irked me. After opening it, i was informed that i had been put into a virtual queue and would be served when the system would have enough compute free for it. Not a bad approach, i have to say, they even used Cloudflare as far as i could tell. The problem was with the numbers detailing how long i'd have to wait:

The waiting times varied from a few minutes to about 17 days in different browser sessions and on different devices, there being no rhyme or reason to them! Now, remember how i mentioned how the system should inspire confidence previously? Well, this doesn't!

In this instance, it would just be better to:

- show the user the average time that a person has to wait for getting service (say, over the last hour, with some placeholder value in place)

- or alternatively just lie to them and clamp the value to something reasonable - no one will wait for 17 days just to register on some site

Lobby based video games have successfully been doing this for ages and it's not even that difficult! Just something like a simple clamped average:

-- LEAST/GREATEST clamp a scalar value (PostgreSQL syntax);
-- MIN/MAX are aggregates and can't be nested around AVG like this
SELECT LEAST(GREATEST(AVG(user_wait_time), 1), 30) AS displayed_wait_time
FROM user_session_statistics
WHERE user_session_created_at > NOW() - INTERVAL '60 minutes';

A tad funny, but overall their attempts at implementing a queue were pretty decent! Though on the other hand, the users might as well have entered their data in a browser-based client-side app and then just submitted it to something like RabbitMQ or Apache Kafka and then we wouldn't even need the waiting in the first place. But i guess all of that wouldn't matter in this particular case, given that authorizing against latvija.lv needed to be done first due to the architecture of the system. Kind of unfortunate, actually, which is why you definitely should load test your systems!
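The same clamped average can also be computed in application code, including the placeholder for when there is no recent data yet. A minimal sketch, assuming wait times are tracked in minutes; the function name and the default values are made up for the example:

```python
from statistics import mean
from typing import Sequence


def estimated_wait_minutes(
    recent_waits: Sequence[float],
    lower: float = 1.0,
    upper: float = 30.0,
    placeholder: float = 5.0,
) -> float:
    """Average of the recent wait times, clamped to the range [lower, upper]."""
    if not recent_waits:
        # No sessions in the window yet: show a placeholder instead of nothing.
        return placeholder
    return min(max(mean(recent_waits), lower), upper)


if __name__ == "__main__":
    print(estimated_wait_minutes([]))              # no data yet
    print(estimated_wait_minutes([0.2, 0.4]))      # clamped up to the lower bound
    print(estimated_wait_minutes([10, 20]))        # plain average
    print(estimated_wait_minutes([17 * 24 * 60]))  # "17 days", clamped down
```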

manavakcina.lv, a look at the actual site

Now, let's suppose that you successfully authenticate with your bank:

And that all of your data goes through. In a word, it just works:

What are you left with? After all of the aforementioned blunders, is the site actually any good, or is it also a disappointment? Actually, i would have to say that it's pretty good! Even before you authenticate in the system, you see the start page which informs you about some of the common questions and it's good. It is simple, clear and concise (though it could have been shown while you're in the queue):

Both the UI and UX are completely acceptable! But not only that, gone is most of the bloat and it is even smaller than the Apturi Covid page, which it should be, given that it doesn't need fancy images or even that many scripts:

(that last one actually makes me think that i might have messed up the caching on Apturi Covid, learning about which is neat, especially because these guys didn't)

The overall decentness of everything does extend to data entry and submitting it. All of it is just usable and pleasant to use. No heavyweight pages that are slow to load, no broken UI, no other weirdness. Just a somewhat minimalist data-oriented page, as governmental pages should be:

Whoever worked on this bit, good job! In contrast, maybe even the whole latvija.lv site should strive to be this clear and usable. Of course, one could claim that this site didn't even need to do all that much and therefore the point is moot, but i disagree - it's good at what it does and it doesn't make using it difficult, which is pretty good as far as both UI and UX are concerned! In addition to that, i actually didn't have a hitch with receiving a notification from them to both my e-mail and mobile phone through SMS:

Now, i indicated a bunch of e-mail addresses and it seems like i only received it at one of them, but i guess they didn't want to overload their mail servers, which is understandable. Plus, the messages themselves were pretty clear as well! Even other people were no longer able or willing to complain about the design of the page that much:

I don't know about you, but when the worst things that can be said about a system are nitpicks, i think it indicates that you're definitely headed in the right direction! Well, if not for the embarrassing integration situation, which should have been caught whilst load testing the system. That was a bit of a bummer.

manavakcina.lv, nitpicks

Of course, there are some nitpicks. While there is a minified JS bundle, i also noticed some rather interesting code, such as:

In addition to that, i noticed that there is still some unminified code in the source of the page, such as the bit for getting the available vaccination places:

Not only did those boys not follow the RESTful API conventions, but they also didn't stick that endpoint behind any sort of authentication, which may or may not be a small oversight. Also, there is some more unminified jQuery code in the page for some reason, but i guess they might just have been strapped for time and didn't think to put it into a separate file, which is why it could be stuck here:

JavaScript ate everyone's lunch

One thing i'd like to also bring up, if a bit unrelated, is how the site breaks if JavaScript is disabled. You actually can't click on any of the dropdowns to see their informative messages, a problem that was also shared by Apturi Covid, because it wasn't server side rendered and instead was only pre-rendered. I don't expect many people in 2021 to have devices which do not support JS, or to stumble upon many people who have decided to disable it for privacy or ideological reasons, but even the latvija.lv site itself needs JavaScript to function.

This is actually what happens if you try authenticating with it if your browser's JavaScript is disabled:

One might chalk it up to SEB bank's adapter code not working well enough, but it doesn't even matter. Someone, somewhere totally overlooked this group of users and decided not to even provide a warning message if JavaScript is disabled. That means that they didn't even attempt to address this problem, or worse yet, didn't even think of it.

It's sad to see the world headed in this direction, especially since SSR still isn't universally supported or even desired by many of the available frameworks such as React, Angular and Vue. There are Next.js, Nuxt.js and other projects, but they seem more like patches upon technologies that are unsuited for it at a conceptual level. I think that it's fair to say that JavaScript is absolutely a requirement in our modern web, something that definitely shouldn't be, especially for government sites. Before long, you won't even support ES5 and other older language versions of ECMAScript, which will cripple older devices, much like the Android root SSL certificates expiring. This isn't even planned obsolescence - it's the killing off of older devices due to ignorance and short-sightedness.

Conclusions and the aftermath

But back to the site in question, what's my verdict?

Overall, it was pretty okay in the end:

There's even a saying that one could use for this situation: "All is well that ends well."

Except, not quite.

You can't just run into a situation like this, shrug and say something along the lines of "Oh well, these things happen." and continue on your merry way without having learnt a single thing. Situations like this should have blameless postmortems done, with root cause analysis not being forgotten about, either! I really hope that they'll be responsible and publish something along the lines of that!

Of course, i almost certainly know that nothing of the sort will be done, but it should - every incident like this should result in a clear action plan of how to prevent situations like this in the future and what was learnt from them. Here, let me go ahead and do the government's job for them, and provide you with a short list:

- before going into production, you need to test your system, but giving it to 1-100 testers isn't enough

- you need not just unit tests, integration tests, end-to-end tests, $FANCY_TEST_METHOD tests, but also load tests

- these load tests should also concern integrations of your system - all of the services that it needs to use to be operable

- this implies that the systems that you integrate with should have either sandboxes or staging/test environments that you have access to; anything less is unacceptable

- you shouldn't run government infrastructure that is not scalable, and those who do should answer for the results of their actions

In summary, this is one of those cases where everything worked out in the end, but there are definitely things that should be learnt from it! Lastly, i'll just leave you with the open source load testing framework that i used for load testing my COVID tracking simulation: https://links.kronis.dev/xqHxKVVWus

Compared to many other testing tools, it's programmable, relatively easy to use and automate, and it proved to be adequate for my needs. Of course, there were also negatives, such as its JS engine being sub-par (since it's implemented in Golang for performance, rather than running a full Node.js or V8 instance) and even if it had certain optimizations, it was still pretty memory hungry - 2000 users took up almost 8 GB of RAM, which comes down to about 4 MB of memory per virtual user with a few variables stored in the memory for each of them. Are there better tools? Yep, but in lieu of knowing about them, start with whatever's decently popular and is good enough for your needs. Writing basic load tests actually only took me a few evenings.
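The per-user memory figure is worth keeping in mind when sizing the machine that generates the load. A quick worked calculation from the numbers above; the extrapolation assumes that memory usage grows linearly with the number of virtual users, which is an assumption rather than something that was measured:

```python
TOTAL_MEMORY_GB = 8
VIRTUAL_USERS = 2000


def memory_per_user_mb(total_gb: float, users: int) -> float:
    """Average memory per virtual user, in MB."""
    return total_gb * 1024 / users


def memory_needed_gb(users: int, mb_per_user: float) -> float:
    """Rough memory needed for `users` virtual users, assuming linear growth."""
    return users * mb_per_user / 1024


if __name__ == "__main__":
    per_user = memory_per_user_mb(TOTAL_MEMORY_GB, VIRTUAL_USERS)
    print(f"{per_user:.3f} MB per virtual user")
    print(f"{memory_needed_gb(10_000, per_user):.1f} GB for 10'000 virtual users")
```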

Disclaimer

After finishing the work on the Apturi Covid page, all of the rights and the management of the actual system have been handed over to SPKC. Given this, the copyright situation is pretty clear, which is why i only use publicly available information about the system (or things that you could figure out with Wappalyzer or similar solutions).
