Moduliths: because we need to scale, but we also cannot afford microservices

Date:

So, let's get one thing straight: if you're working in ICT, you're probably not in FAANG. Unless you are, in which case, good job at getting there! But for the rest of us, that's somewhat unlikely. Let's look at how many employees those companies have:

Now, it's pretty obvious that not 100% of those work as software developers, so perhaps that data isn't quite as useful to us, but for some back of the napkin maths, let's assume that 50% of all of those are keyboard toting software warriors. So, ignoring that Amazon's number of devs is probably way too high (since they do have a whole bunch of warehouses as well), we'd end up with a half of 1 312 230 employees or 656 115 heads. To make it even more simple, let's say there's 500 000 devs in FAANG.

So how many software developers are there in the world? Approximately 26 400 000, according to some sources. So that means that approximately 1.9% of all of the devs work in FAANG. In other words, in a room of 100 people, about 2 of them will have to deal with the scale of projects that these companies are familiar with and will have to pick solutions to meet their demands.

We're mere mortals

And as for the rest of us? Smaller scales. Smaller budgets. Smaller (and hopefully simpler) systems. So perhaps you don't necessarily need all of the shiny toys that the giants use. Not because the benefits of Kubernetes, Istio, Kiali and Vault are not plentiful. Not because running all of your components on AWS isn't nice.

But simply because you can't afford it. You don't have limitless funds to throw at problems until you can pretend they don't exist. You don't have a seemingly limitless capacity to throw people and time at problems until they crumble under the weight of their collective effort and man-years spent on them. Instead, you probably have about 5 or 6 guys (or gals) you can have work on a project for a client and they need to knock something usable out in a few months.

So, let's suppose that you do manage to successfully choose the right tools for the job and manage to create something successful. Let's say that you have a containerized app that uses Docker Swarm with Portainer for orchestration that won't make you go bald and also frees you from the shackles of updating your JDK version and poking Tomcat every few months until it starts to work like you want it to. And this monolithic app of yours is built with something like Spring Boot and Java, both reasonably good choices. Maybe you're even running it in a reasonably priced data center somewhere, something like Time4VPS (affiliate link because of no ads on the blog, which itself is running on their VPSes) with reasonable self-service capabilities, instead of having to beg some admin for a VM.

And it even seems to work! Until, one day...

Even mortals need to scale

Your clients call you and tell you that the app is crashing. It got linked on Reddit, Hacker News or Facebook, got lots of traction for whatever reason, and now the servers are crumbling under the weight of being slammed with request after request. All of a sudden, the single instance of your monolith cannot handle the load!

Because you didn't have the capacity to make your development last 2x-4x longer to develop everything as individually scalable microservices, you can't just change some parameter and have 10 instances instead of 1 running. One option is to simply scale up instead of out - making the singular instance of your application bigger, adding more CPU cores, more RAM, more disk space. But even that has its limits - eventually running on a single instance will be risky. Will you be sure that you cannot saturate the network under peak load? Will you be sure that crashes of the single node won't result in site-wide outages? Don't be silly, of course they will bring everything down!

It might not even be a problem of provisioning new infrastructure, something that's actually pretty easy to do, even with Time4VPS. It might not even be a problem of not being able to easily orchestrate scaled deployments - even Docker Swarm makes replication easy! Instead, your application simply isn't scalable because of how you wrote it! Let's have a look at some of the common problems.

Data that should be shared will be local

Suppose that we decide to run 2 instances of the monolithic app in parallel. We don't have sticky HTTP sessions, so the user's requests might randomly end up being processed by either of these instances. And even if some smartypants were to suggest that modern load balancers make implementing sticky sessions easy, suppose an instance crashes: the effect will be the same. What I'm talking about here is that data which should be shared amongst these instances will instead be local to each of them, separately.

So a user might log in to instance #1, but when their requests end up at instance #2, it will complain that it has no idea who they are and will deny their requests. So, suppose that you opt to keep this data in an instance of Redis, something that seems pretty well supported, right? Well, what about when your app has sagas that span across more than one network request?
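To make the difference concrete, here is a minimal sketch of the login problem. All of the names are made up for illustration, and the shared map merely stands in for an external store such as Redis, so treat it as a toy model rather than a real session implementation:

```java
import java.util.Map;
import java.util.Optional;
import java.util.concurrent.ConcurrentHashMap;

// Hypothetical names throughout; the shared map stands in for an external store such as Redis.
class SessionDemo {
    public static void main(String[] args) {
        // Each instance keeps sessions in its own memory: a login on #1 is invisible to #2.
        AppInstance local1 = new AppInstance(new ConcurrentHashMap<>());
        AppInstance local2 = new AppInstance(new ConcurrentHashMap<>());
        local1.login("token-abc", "alice");
        System.out.println("local store, instance #2: " + local2.whoAmI("token-abc"));

        // Both instances point at the same store: either one can serve the request.
        Map<String, String> shared = new ConcurrentHashMap<>();
        AppInstance shared1 = new AppInstance(shared);
        AppInstance shared2 = new AppInstance(shared);
        shared1.login("token-abc", "alice");
        System.out.println("shared store, instance #2: " + shared2.whoAmI("token-abc"));
    }
}

class AppInstance {
    private final Map<String, String> sessions; // session token -> user name

    AppInstance(Map<String, String> sessions) {
        this.sessions = sessions;
    }

    void login(String token, String user) {
        sessions.put(token, user);
    }

    Optional<String> whoAmI(String token) {
        return Optional.ofNullable(sessions.get(token));
    }
}
```

With local stores, instance #2 comes back empty-handed; with the shared one, it recognizes the user. The same reasoning applies to any other per-user state that you'd be tempted to keep in memory.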

Suppose that you use server side rendering, with something like JSF and PrimeFaces, and a user opens some form of your site, but when they attempt to press a button and an AJAX request sends the data to the server, our instance #2 will realize that there is no state about the open form or the button and it will fail. It doesn't seem like there is a solution for this, except for writing your front end as a separate app with something like Angular/React/Vue and using RESTful APIs for making requests that don't need additional state to be processed, or simply using server side rendering with completely stateless requests.

So, as long as you're aware of these pitfalls at the start of developing your monolith (and actually test it with multiple parallel instances ahead of time, for whatever reason), it seems like you can perhaps get away with scaling it up horizontally, can't you? Well no, that alone is not enough...

Scheduled processes will conflict

Suppose you have a process that updates some users' data or perhaps sends e-mails every hour. If you simply scale your monolith, you might discover that your users receive every e-mail twice and that some of the data in the DB is plain wrong after these batch processes have run, especially if you have stored procedures or incremental actions as well!

Even if you attempt to put your code inside of a synchronized block or attempt to implement some in-process locking, that will do precisely nothing for the root cause of your problem - the fact that the instances aren't agreeing on which of them will handle a specific task for which data. However, if the amount of data is small enough to be processed by one instance, you might avoid these problems by making sure that only one instance runs the process, while the others handle serving REST requests and the other stuff.
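If you'd rather have the instances agree amongst themselves, one possible approach (not the only one, and not something the rest of this post relies on) is to have each instance claim a run in a store that all of them share. Below is a rough sketch with made-up names, where a ConcurrentHashMap plays the role of a database table with a unique key on the job run:

```java
import java.util.concurrent.ConcurrentHashMap;

// Hypothetical sketch: the map stands in for a shared DB table with a unique key per job run.
class JobClaimDemo {
    public static void main(String[] args) {
        JobClaims claims = new JobClaims();
        String run = "send-emails@2024-01-01T10:00"; // made-up job name and time slot

        // Two instances wake up for the same hourly slot; only the first claim succeeds.
        System.out.println("instance #1 runs job: " + claims.tryClaim(run, "instance-1"));
        System.out.println("instance #2 runs job: " + claims.tryClaim(run, "instance-2"));
    }
}

class JobClaims {
    // job run id -> id of the instance that owns it
    private final ConcurrentHashMap<String, String> owners = new ConcurrentHashMap<>();

    /** Returns true only for the first caller that claims the given run. */
    boolean tryClaim(String runId, String instanceId) {
        // putIfAbsent is atomic, much like an INSERT that fails on a duplicate key.
        return owners.putIfAbsent(runId, instanceId) == null;
    }
}
```

The important bit is that the claim lives outside of any single JVM. A synchronized block only guards the threads within one process, which is exactly why it doesn't help once there are two processes.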

Hmm, specialized instances of your app that serve a specific role? That actually does sound an awful lot like microservices? So, why didn't we use them in the first place?

Microservices suck

Microservices suck, like they really suck. All of a sudden, you need to not only talk with the front end and parse all of the requests, serialize/deserialize the data, validate it and send responses back to the client, but you need to do that even between the internal components of your infrastructure. The painful bit here is the fact that 90% of the time you'll probably use REST for doing that, which doesn't always translate nicely to making an app do something, because instead it forces you to think about resources.

So why not use gRPC? Because it's not awfully comfy to have to generate stubs, think about protobufs and channels and write bunches of boilerplate code to get something that essentially amounts to calling a simple method. Sure, if you have a polyglot architecture, it's one of the few solutions that sorta make sense, but what if we want to do something simple between 2 Java instances, without attempting to put method calls in the DB or use some overcomplicated enterprise message bus?

Why can't we just do this?

    public void doSomething(DomainObject objectToProcess) {
        ...
        try {
            OtherDomainObject processedObject = processDataInExternalSystem(objectToProcess);
        } catch (CrappyNetworkException e) {
            logger.error("Distributed systems fail all the time!", e);
        } catch (ExternalValidationException e) {
            logger.error("The data we passed to external service was faulty!", e);
        }
        ...
    }

    external OtherDomainObject processDataInExternalSystem(DomainObject objectToProcess) throws CrappyNetworkException, ExternalValidationException;

Because the people who design programming languages have decided that implementing logic to deal with distributed systems at the language construct level (the fake external keyword above which would let us implement an interface that would be run by a different service, probably based on some eldritch XML configuration logic) isn't worth it and have left us to patch something like that in at the framework level, to less than stellar results.
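For a taste of what "patching it in at the framework level" can look like, here is a small sketch built on the JDK's own dynamic proxies. The interface, the stub factory and the fake transport are all hypothetical and nothing here talks to a real network; a real framework would also have to serialize the arguments, deal with timeouts and map failures to exceptions:

```java
import java.lang.reflect.InvocationHandler;
import java.lang.reflect.Proxy;
import java.util.function.BiFunction;

class RemoteProxyDemo {
    public static void main(String[] args) {
        // Fake transport that answers locally; a real one would send the call over the network.
        BiFunction<String, Object[], Object> fakeTransport =
                (method, callArgs) -> "processed:" + callArgs[0];

        ExternalSystem external = RemoteStubs.create(ExternalSystem.class, fakeTransport);
        System.out.println(external.processData("order-42"));
    }
}

/** Hypothetical interface whose implementation would live in another service. */
interface ExternalSystem {
    String processData(String input);
}

class RemoteStubs {
    /** Builds a stub that forwards calls made through the interface to the given transport. */
    static <T> T create(Class<T> type, BiFunction<String, Object[], Object> transport) {
        InvocationHandler handler =
                (proxy, method, args) -> transport.apply(method.getName(), args);
        Object stub = Proxy.newProxyInstance(
                type.getClassLoader(), new Class<?>[] {type}, handler);
        return type.cast(stub);
    }
}
```

The call site ends up looking like a plain method call, but all of the hard parts are hidden inside of the transport, which is pretty much where the less than stellar results come from.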

But wait, it gets worse! Suppose that passing data around and authentication aren't a large problem! Soon you'll run into the problem of following microservice "best practices" and having each of them have their own instance of a DB. Welcome to data consistency hell! You'll have no good ways to make foreign keys across different DB instances and good luck when you inevitably try feeding them into some visualization program like DbVisualizer, just to discover that it won't really help you that much, because it literally can't work with distributed data like that!

Well, okay, suppose we opt to ignore those supposed best practices and go with a single monolithic DB, since sharding and replication are too hard anyways, as is managing multiple DBs. Or maybe we use something like TiDB to attempt to handle most of the difficult stuff for us, until the leaky abstraction folds and fails in front of us. Then, a different problem presents itself.

With microservices, you are no longer running a single app instance, but multiple ones. Instances, each of which probably needs different configuration and different parameters to start. Each of which will probably do stuff similarly enough to other instances for it to seem inconspicuous enough, until at some point in time the differences will present themselves and everything will go wrong. Maybe someone will change some bit of configuration for the Spring Boot embedded Tomcat for 1 service out of 5? Or maybe they'll change it for 4 out of 5 and will forget about that last one? And 12 Factor Apps won't help you here, because we're not talking just about the configuration that's passed into the service, but the logic behind how that service will interpret that configuration and what it'll do with it. Or maybe you'll try to share all of the configuration as a Java library since after all it is common code, just to grow gray hairs of old age because of how much time managing that will take? Or maybe you'll attempt to create a service that will provide configuration for other services, thus making everything worse in the process because of the added complexity?

Gee, it seems like you just can't win with microservices, and I haven't even gotten into debugging yet! Heavens, imagine debugging not just race conditions and Byzantine failures across the threads and async processes within your app, but multiple networked services where oftentimes you won't be able to put in a breakpoint and see the full picture. Or you'll need to spend a bunch of minutes starting a variety of services just to test why one field in your object is null in some super specific circumstances. Maybe you should just add OpenTracing to your project, once you actually realize what it is after wasting even more time? I'm guessing no, since you're already running late at this point.

The short story here is that everything that can go wrong here, will. Unless you are FAANG, think long and hard about doing microservices. So what can you do?

Meet The Modulith: the modular monolith

Take the concept of a mono-repo one step further and apply DRY to entire projects! Do what you've been doing up to this point, write monoliths! But not just regular monoliths, the kind that actually contain multiple services. Services that can be toggled on or off with feature toggles, perhaps as simple as environment variables if you'd like.

So now, all of a sudden, your services with their corresponding configuration can look like the following:

    serve_users_1:
      HOSTNAME=https://my-app.com
      FEATURE_FRONT_AND_BACK_END=true

    serve_users_2:
      HOSTNAME=https://my-app.com
      FEATURE_FRONT_AND_BACK_END=true

    background_functionality:
      HOSTNAME=https://internal.my-app.com
      FEATURE_SEND_EMAILS=true
      FEATURE_SCHEDULED_PROCESSES=true

    external_integration:
      HOSTNAME=https://api.my-app.com
      FEATURE_EXTERNAL_SERVICE=true

And just like that, you can have as many instances of your service as you have the money and the need for. You can easily store all of the data in a single DB, and every instance will be aware of the data in the DB and will have internal access to all of the functionality that exists. No more awkward shuffling around with data or worrying (as much) about race conditions. No more struggling with bunches of different code deployments. Sure, the configuration will differ a little bit depending on which of the features are enabled, but that'll be pretty minimal.

Of course, you'll need to think a little bit about how to only activate the modules that you actually need and there will inevitably be at least a little bit of overhead, but it'll still be far less painful than running a bunch of different services.
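As for activating only the modules that you need, a bare-bones sketch could look something like the following. The toggle names match the FEATURE_* variables used in this post, but the Modulith class and the startup hooks are invented for the example; in a Spring Boot app you'd more likely lean on the framework's own conditional configuration instead:

```java
import java.util.ArrayList;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;

class ModulithDemo {
    public static void main(String[] args) {
        // In a real deployment this map would come from System.getenv().
        Map<String, String> env = Map.of(
                "FEATURE_SEND_EMAILS", "true",
                "FEATURE_SCHEDULED_PROCESSES", "true");
        System.out.println("enabled modules: " + Modulith.start(env));
    }
}

class Modulith {
    // Toggle name -> startup hook. These hooks only print; real ones would
    // register controllers, schedulers, queue listeners and so on.
    private static final Map<String, Runnable> MODULES = new LinkedHashMap<>();

    static {
        MODULES.put("FEATURE_FRONT_AND_BACK_END", () -> System.out.println("serving web requests"));
        MODULES.put("FEATURE_SEND_EMAILS", () -> System.out.println("sending e-mails"));
        MODULES.put("FEATURE_SCHEDULED_PROCESSES", () -> System.out.println("running scheduled processes"));
        MODULES.put("FEATURE_EXTERNAL_SERVICE", () -> System.out.println("serving the external API"));
    }

    /** Starts every module whose toggle is set to "true" and returns their names. */
    static List<String> start(Map<String, String> env) {
        List<String> enabled = new ArrayList<>();
        for (Map.Entry<String, Runnable> module : MODULES.entrySet()) {
            // parseBoolean treats a missing (null) value as false, so modules are off by default.
            if (Boolean.parseBoolean(env.get(module.getKey()))) {
                module.getValue().run();
                enabled.add(module.getKey());
            }
        }
        return enabled;
    }
}
```

Every instance ships the exact same code, and the only thing that differs between a web node and a background node is which toggles are set.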

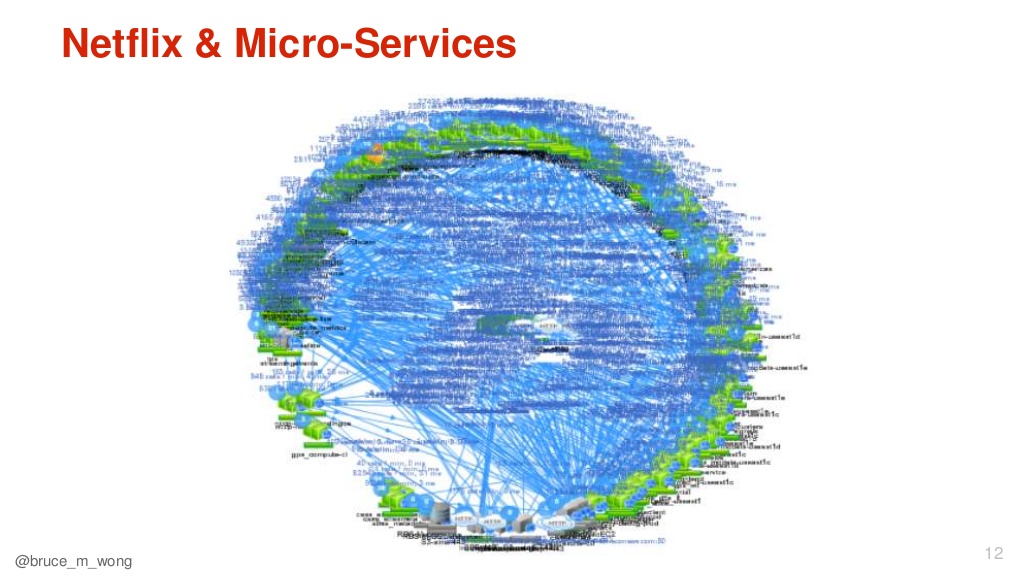

But you'll probably ask: "But if we put all of our domain code in a single codebase, won't it become a 5 million line of code monster that no one can work with?" Eventually, sure. But I'd argue that it's a little bit better than having 10 similar projects with 1 million lines of code each that basically do the exact same thing. Just look at how the microservices of Netflix look:

Do you want to live in a world where you need to work with that? I don't.

And should I ever have requirements that have outgrown the scope of the single project, then I'll simply compose several of these moduliths and essentially end up with macro services. You know, basically what folks used to do back in the day, before microservices were even a thing.

Moduliths in the wild

Actually, what I'm describing isn't even that unusual or that much of a novel idea, save for the unusual name.

The best example that I can think of, one that's used by a large number of people in production, is GitLab Omnibus. It's an installation of GitLab which packages all of the services that are required to launch it, and some that the users could want to enable later. In practice, this works wonderfully - no longer do you need to manually configure a database, or an instance of Redis, or the GitLab Registry for storing container images.

It becomes as easy as toggling a configuration variable and since this Omnibus release has been tested, it also appears to be more stable. It's no longer likely that you'll manually install the wrong release of PostgreSQL or misunderstand how the Registry should integrate with the rest of GitLab. And if you don't want to launch those services, they won't really take up that much of your time. And if you want to run them on another machine? Just run another instance where you enable only those other services!

Actually, that makes me hope for a day when relational databases will be truly distributed, like TiDB attempts to be, and we'll be able to deploy the front end, back end and even an instance of an application's DB (or a small part of it, anyways) together as a single computational unit. Of course, one can argue that distributed systems never should end up this way, but if it's worked for years for desktop software, I don't see why server software should be that much harder. I mean, it works for distributed file systems like GlusterFS and Ceph!
