DIY surveillance: motion detection with OpenCV and Python


So, a little while ago i decided that it would be a good idea to see if someone drops by my dorm room while i'm not there. Now, normally i lock the door so there's not too much to worry about, but in this particular case i was waiting for a scheduled room inspection by the university.

My servers are already on most of the time. The exception is a few hours at night, when rtcwake turns them off so that i can sleep without being disturbed by the PSU noise (i could replace the CPU fans with passive heatsinks, but not the PSU ones). Since i also have a webcam lying around, i figured that i'd put two and two together and see what i could cook up.

The script and the idea behind it

You can find a lot of examples of using OpenCV online, and i based this script on one of them, though i adjusted it to run in the background all the time as a service/container.

The idea was pretty simple: every 30 seconds start a new video. At the start of the video, take a reference image and during it, compare each Nth frame (not to cause too much CPU load) against the reference one and figure out if anything has changed. If it has, keep the video after it is finished. If not, delete the video and let the process continue.
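The "each Nth frame" part boils down to a bit of arithmetic. Here's a minimal sketch of it, where `frame_stride` and `frames_to_process` are names made up for this illustration and the 30 FPS camera is just an assumed value:

```python
def frame_stride(camera_fps, target_fps):
    """How many captured frames go by for each processed one (at least 1)."""
    if target_fps <= 0:
        raise ValueError("target_fps must be positive")
    return max(1, round(camera_fps / target_fps))


def frames_to_process(total_frames, stride):
    """1-based indexes of the frames that would actually be compared and saved."""
    return [n for n in range(1, total_frames + 1) if n % stride == 0]


stride = frame_stride(30, 5)           # assumed 30 FPS camera, 5 FPS target
print(stride)                          # 6
print(frames_to_process(20, stride))   # [6, 12, 18]
```

Rounding the stride to a whole number matters here: with a fractional stride, a check like `n % stride == 0` would rarely hit zero and most frames would be skipped.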

I have the script up on my GitLab, but i'm not publishing the full repository yet, since it includes GitLab CI stuff, Swarm redeploy hooks and so on. I'm lazy like that: specifying everything that CI needs both in the .gitlab-ci.yml file and in build environment parameters is something that i don't have the time for. So here's the script in its entirety:

import cv2
import time
from datetime import datetime
import os
import platform

def env_or_default(default, environment_variable, integer=False, boolean=False):
    # use the environment variable when it is set, otherwise fall back to the default
    value = os.environ.get(environment_variable, default)
    if integer:
        return int(value)
    elif boolean:
        # bool('False') is True for any non-empty string, so parse the text instead
        return str(value).strip().lower() in ('1', 'true', 'yes', 'on')
    else:
        return value

BLUR_RESOLUTION = env_or_default(32 + 1, 'BLUR_RESOLUTION', integer=True) # Gaussian kernel size, has to be odd
THRESHOLD_VALUE = env_or_default(48, 'THRESHOLD_VALUE', integer=True)
CONTOUR_AREA = env_or_default(64 * 64, 'CONTOUR_AREA', integer=True)
CAPTURE_LENGTH = env_or_default(30, 'CAPTURE_LENGTH', integer=True)
TARGET_FPS = env_or_default(5, 'TARGET_FPS', integer=True)
SHOW_IMAGES = env_or_default(False, 'SHOW_IMAGES', boolean=True)
TIME_START = env_or_default('09:00', 'TIME_START')
TIME_END = env_or_default('20:00', 'TIME_END')

def time_in_range(start, end):
    now = datetime.now().time().replace(microsecond=0)
    start = datetime.strptime(start, '%H:%M').time()
    end = datetime.strptime(end, '%H:%M').time()
    if end <= start:
        # the window wraps past midnight, e.g. 22:00 to 06:00
        in_range = now >= start or now <= end
    else:
        in_range = start <= now <= end
    print('Start: ' + str(start) + ' Current: ' + str(now) + ' End: ' + str(end) + ' In range: ' + str(in_range))
    return in_range

# https://levelup.gitconnected.com/build-your-own-motion-detector-using-webcam-and-opencv-in-python-ff5bdb78a55e
def process_video():
    first_frame = None
    video = cv2.VideoCapture(0)
    if not video.isOpened():
        print("Error starting video capture!")
        video.release()
        time.sleep(CAPTURE_LENGTH) # wait before the next attempt instead of retrying in a tight loop
        return

    fps = video.get(cv2.CAP_PROP_FPS)
    width = int(video.get(cv2.CAP_PROP_FRAME_WIDTH))
    height = int(video.get(cv2.CAP_PROP_FRAME_HEIGHT))
    print("Frames per second: " + str(fps) + ", resolution: " + str(width) + "x" + str(height))

    if platform.system() == 'Windows':
        fourcc = cv2.VideoWriter_fourcc(*'XVID')
    else:
        fourcc = cv2.VideoWriter_fourcc(*'MJPG') # nothing works, even installing x264 and using X264 as the fourcc is broken

    date_format = datetime.now().strftime('%Y-%m-%d_%H-%M-%S')
    video_filename = os.path.join('videos', date_format + '.avi')
    print("Video filename: " + video_filename)
    video_out = cv2.VideoWriter(video_filename, fourcc, TARGET_FPS, (width, height))

    # only every Nth frame is processed; N is rounded to a whole number so that the modulo check below can hit zero
    frame_write_mod = max(1, round(fps / TARGET_FPS))
    init_frames_skipped = 0 # we need to skip a few frames right after the camera initializes so the colors are stable
    video_frames = 0 # count total frames to figure out which ones need to be saved, which skipped (not recording at full framerate)
    is_motion_detected = False

    while True:
        check, color_frame = video.read()
        if not check:
            break # the camera stopped delivering frames
        video_frames += 1
        if video_frames % frame_write_mod != 0:
            continue
        if init_frames_skipped < TARGET_FPS * 2:
            init_frames_skipped += 1
            continue

        grayscale_frame = cv2.cvtColor(color_frame, cv2.COLOR_BGR2GRAY)
        grayscale_frame = cv2.GaussianBlur(grayscale_frame, (BLUR_RESOLUTION, BLUR_RESOLUTION), 0)
        if first_frame is None:
            first_frame = grayscale_frame # reference image for the rest of this video
            continue

        delta_frame = cv2.absdiff(first_frame, grayscale_frame)
        threshold_frame = cv2.threshold(delta_frame, THRESHOLD_VALUE, 255, cv2.THRESH_BINARY)[1]
        # with iterations=0 this should be a no-op; a higher value would fill small gaps in the mask
        threshold_frame = cv2.dilate(threshold_frame, None, iterations=0)
        (contours, _) = cv2.findContours(threshold_frame.copy(), cv2.RETR_EXTERNAL, cv2.CHAIN_APPROX_SIMPLE)

        for contour in contours:
            if cv2.contourArea(contour) < CONTOUR_AREA:
                continue # too small, probably noise
            is_motion_detected = True
            (x, y, w, h) = cv2.boundingRect(contour)
            red = (0, 0, 255)
            cv2.rectangle(color_frame, (x,y), (x+w, y+h), red, 2)

        if SHOW_IMAGES:
            cv2.imshow("Gray Frame", grayscale_frame)
            cv2.imshow("Delta Frame", delta_frame)
            cv2.imshow("Threshold Frame", threshold_frame)
            cv2.imshow("Color Frame", color_frame)

        video_out.write(color_frame)
        video_seconds = int(video_frames / fps)
        if video_seconds > CAPTURE_LENGTH:
            break

        # actually, since we want to run the script on Linux, we're disabling the below, because it's not included!
        # cv2.error: OpenCV(4.4.0) /tmp/pip-install-ofq0plna/opencv-python/opencv/modules/highgui/src/window.cpp:717: error: (-2:Unspecified error) The function is not implemented. Rebuild the library with Windows, GTK+ 2.x or Cocoa support. If you are on Ubuntu or Debian, install libgtk2.0-dev and pkg-config, then re-run cmake or configure script in function 'cvWaitKey'
        #_ = cv2.waitKey(1) # oddly enough, we don't actually care about the key, but there is no cv2.wait command and it freezes without it

    video.release()
    video_out.release()
    print("Keeping video: " + str(is_motion_detected))
    if not is_motion_detected:
        if os.path.exists(video_filename):
            os.remove(video_filename)
    if SHOW_IMAGES:
        cv2.destroyAllWindows()

def main():
    print("webcam-motion-detection has been started!")
    while True:
        should_run = time_in_range(TIME_START, TIME_END)
        if should_run:
            process_video()
        else:
            time.sleep(CAPTURE_LENGTH)

main()

One of the first things that i did was pull many of the parameters out so that they can be customized, either directly in the script or by passing in an environment variable. This proved useful later, when i discovered that only the MJPG codec worked on Linux. It produces horribly large files, so i had to decrease the framerate.
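One thing to watch out for with environment variables is that they always arrive as strings, and in Python any non-empty string is truthy, so `bool('False')` is `True`. Below is a small sketch of parsing a flag explicitly. The `parse_flag` helper and the list of accepted spellings are assumptions made for this example:

```python
import os


def parse_flag(name, default=False, environ=None):
    """Read a boolean flag from the environment, treating common 'true' spellings as True."""
    environ = os.environ if environ is None else environ
    raw = environ.get(name)
    if raw is None:
        return default
    return raw.strip().lower() in ("1", "true", "yes", "on")


fake_env = {"SHOW_IMAGES": "False"}   # stand-in for os.environ
print(bool(fake_env["SHOW_IMAGES"]))                 # True, which is the trap
print(parse_flag("SHOW_IMAGES", environ=fake_env))   # False
```

Passing the environment in as a parameter is only there to make the helper easy to try out without touching the real process environment.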

Apart from that, the logic uses a few images to figure out whether motion has taken place:

- full color image - what will have rectangles applied on it and saved in the video

- grayscale image - converted to only have one intensity value per pixel, easier to process

- blurred grayscale image - since the camera is really bad and jittery, the image is blurred to attempt to compensate for this somewhat

- delta_frame - contains the difference between the first frame for the video and the current frame

- threshold_frame - contains bits that have changed enough to be logged as detected changes, to ignore noise

After we get the threshold image, we find where the contours are and draw them as rectangles on the main frame of the video. This is useful for seeing what has caused the changes, as well as for debugging. One oddity that you might notice is that if there's a "dynamic" object in view at the start of the video, it becomes part of the baseline. If it then moves out of view, its absence will also be marked as a change, which might seem a bit silly when an empty space is pointed out.
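To make the baseline oddity concrete, here's a toy version of the delta and threshold steps using plain Python lists instead of OpenCV. It's only a sketch: the 4x4 "frames" are invented, and counting changed pixels is a crude stand-in for the contour area check, not what `cv2.findContours` actually does.

```python
def abs_diff(reference, current):
    """Per-pixel absolute difference of two equally sized grayscale frames."""
    return [[abs(a - b) for a, b in zip(row_a, row_b)]
            for row_a, row_b in zip(reference, current)]


def threshold(delta, limit):
    """Binary mask: 255 where the change exceeds the limit, 0 elsewhere."""
    return [[255 if value > limit else 0 for value in row] for row in delta]


def changed_pixels(mask):
    """Number of pixels flagged as changed."""
    return sum(value == 255 for row in mask for value in row)


EMPTY = [[20] * 4 for _ in range(4)]           # dark, empty scene
WITH_OBJECT = [row[:] for row in EMPTY]
for y in (1, 2):
    for x in (1, 2):
        WITH_OBJECT[y][x] = 200                # a bright 2x2 "object"

THRESHOLD_VALUE = 48

# the object shows up after the reference frame was taken
appeared = changed_pixels(threshold(abs_diff(EMPTY, WITH_OBJECT), THRESHOLD_VALUE))
# the object was in the reference frame and then left: the empty spot is flagged too
left = changed_pixels(threshold(abs_diff(WITH_OBJECT, EMPTY), THRESHOLD_VALUE))
print(appeared, left)  # 4 4
```

Because the difference is absolute, it doesn't matter which of the two frames contains the object, which is exactly why an empty space can end up with a rectangle around it.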

Here's a quick example of an early version of the script (with slightly different parameters) showing it in action:

Also, you can change the minimum size of the detected objects (CONTOUR_AREA), which is why my fingers don't show up as marked rectangles in the video.

An example of the script running in the background

And here's an example of a full video that was saved by the script running in the background on the server, chronicling me getting ready to go to the countryside for the weekend. Here you can see both how poor and motion blurry my camera is, as well as how many smaller elements are logged as changes, including even a shadow on the wall:

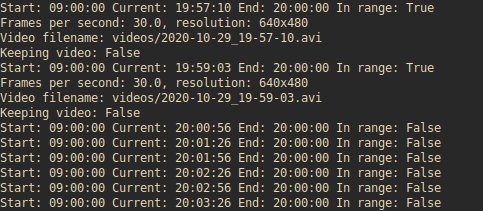

And there's even a little bit of logging, which logs every attempt to film something and whether the video file was kept:

Another addition that i made was logic to not run the script outside of specific hours, both because the camera would generate useless noise at night, which would just fill up my HDD for no good reason, and also because i don't want pictures of ghosts:

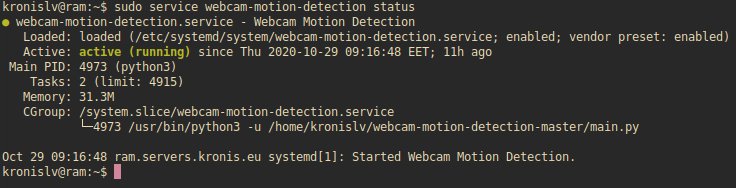
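The time window check is easy to get subtly wrong when the window wraps past midnight, so here's a small sketch of it as a pure function. The `in_window` name is made up for this example, and `now` is passed in as a parameter (rather than read from the clock) purely so that it can be tried with fixed times:

```python
from datetime import datetime, time


def in_window(now, start, end):
    """True when `now` is inside [start, end], including windows that wrap past midnight."""
    start_t = datetime.strptime(start, "%H:%M").time()
    end_t = datetime.strptime(end, "%H:%M").time()
    if end_t <= start_t:
        # e.g. 22:00 to 06:00: late evening OR early morning counts
        return now >= start_t or now <= end_t
    return start_t <= now <= end_t


print(in_window(time(12, 0), "09:00", "20:00"))    # True
print(in_window(time(23, 30), "09:00", "20:00"))   # False
print(in_window(time(23, 30), "22:00", "06:00"))   # True
print(in_window(time(12, 0), "22:00", "06:00"))    # False
```

In the wrapping case both comparisons have to be made against the current time. Comparing the start against the end there instead would never be true, so the early morning half of the window would be missed.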

Now, in my case, the videos themselves were saved in the .avi format which resulted in pretty low encoding load on the system, but pretty big file sizes. That said, i'm pretty happy with the overall resource usage of the script and how it integrates with systemd:

The problems

Most of the problems that i ran into were actually caused by OpenCV and its oddities. As a matter of fact, there were so many broken things that i made a separate post here: OpenCV (with Python) is broken

I actually had to mess around quite a bit with systemd to get it working properly as well:

[Unit]
Description=Webcam Motion Detection
#Wants=network.target
#After=network.target

[Service]
User=kronislv
Group=kronislv
WorkingDirectory=/home/kronislv/webcam-motion-detection-master/
ExecStart=python3 -u /home/kronislv/webcam-motion-detection-master/main.py
StandardOutput=file:/home/kronislv/webcam-motion-detection-master/detection.log
StandardError=inherit
Restart=always

[Install]
WantedBy=multi-user.target

Here i specified the -u option for Python to output results to the logs instantly, so that i could check them with tail -f detection.log, but also had to specify the output as a file, because otherwise systemd would eat them up and i'd have to use journalctl to get them, lest they be lost forever.

Also, i actually specified the user and group, but for whatever reason the log file is still created under root:root which is stupid scary. Why don't i get an error for wrong config instead, if i've entered something wrong?

Also, i tried running it on Docker Swarm:

version: '3.4'
services:
  detector:
    image: registry.kronis.dev/smol/webcam-motion-detection/webcam-motion-detection:latest
    volumes:
      # - /dev/video0:/dev/video0
      - detection-data:/app/videos
    environment:
      - CAPTURE_LENGTH=60
      - TARGET_FPS=10
      - SHOW_IMAGES=False
      - TIME_START=09:00
      - TIME_END=20:00
    devices:
      - /dev/video0:/dev/video0
    deploy:
      mode: replicated
      replicas: 1
      placement:
        constraints:
          - node.hostname == ram.servers.kronis.eu
      resources:
        limits:
          memory: 128M
          cpus: '0.25'
volumes:
  detection-data:
    driver: local
    driver_opts:
      type: none
      device: /home/kronislv/docker/webcam-motion-detection/data/webcam-motion-detection/app/videos
      o: bind

But that did not work, because Swarm doesn't let you work with devices. It's described in more detail here: Docker Swarm access to devices is nonexistent
