How to publicly access your homelab behind NAT


After moving out of my university dorm room, i was met with an unwelcome surprise: my personal servers, which i had originally kept on my table in the dorms, were no longer accessible from the Internet once i connected them to my home network. It appears that in the dorms i was assigned a public IP address through DHCP, which i could use for dynamic DNS and therefore host servers that i could access remotely. Now, however, my servers were behind NAT.

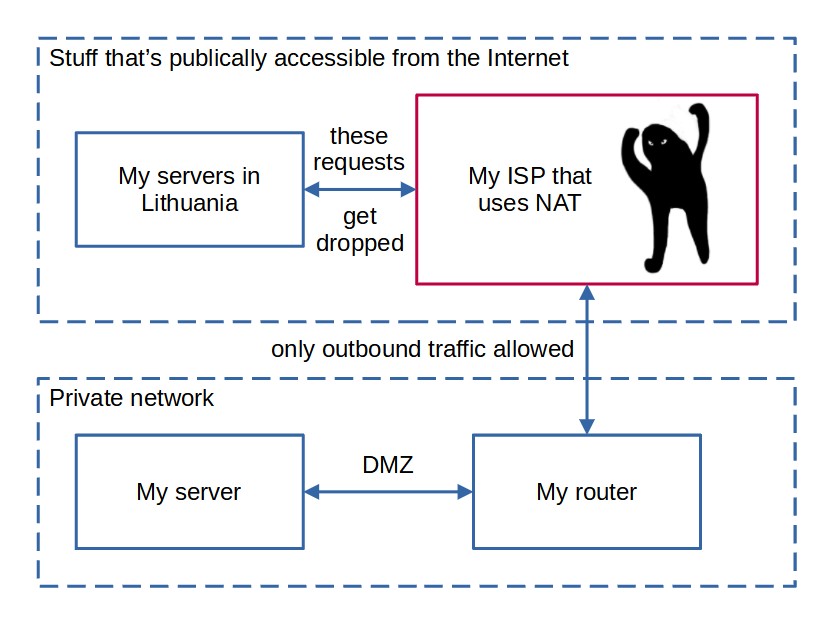

For example, let's configure a DMZ in the router settings:

Then, let's find out our public IP address (which changes occasionally, but should be good enough for pinging my server):

And finally, let's check whether it is reachable from another server, say, a VPS that i rent from Time4VPS:

Unfortunately, it appears that it's not reachable! So, why does this happen? Because the network is actually something like this:

Due to the shortage of IPv4 addresses, NAT is used on the ISP's hardware: outbound traffic is handled by remapping the IP address space, so that many customers can share a smaller number of public addresses. The downside, obviously, is that no device in the network gets an actual public IP address, so hosting servers on them becomes a bit of a problem. This is caused by the fact that some time ago someone decided that 32 bits, or a bit above 4 billion IP addresses, surely would be enough for all of the computers in the future, right? In my opinion, this is a sign both of the hardware at the time being weak enough for this to be an actual concern, and of the fact that people oftentimes can't predict how popular their technologies will get. So, basically, due to a decision made in the early 1980s, we still suffer.
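A quick way to tell whether the NAT is happening on the ISP's side is to look at the WAN address that the router itself reports: if it falls into a private or shared range, then no amount of DMZ configuration will help. The sketch below is just an illustration of that check. The function name is made up for this example, the sample address is a placeholder, and only the RFC 1918 and RFC 6598 (100.64.0.0/10) ranges are covered.

```shell
# Hypothetical helper: succeeds (exit code 0) if the IPv4 address falls into a
# private (RFC 1918) or shared carrier-grade NAT (RFC 6598) range.
is_non_public_ipv4() {
    first=${1%%.*}        # first octet
    rest=${1#*.}
    second=${rest%%.*}    # second octet
    if [ "$first" -eq 10 ]; then return 0; fi
    if [ "$first" -eq 172 ] && [ "$second" -ge 16 ] && [ "$second" -le 31 ]; then return 0; fi
    if [ "$first" -eq 192 ] && [ "$second" -eq 168 ]; then return 0; fi
    if [ "$first" -eq 100 ] && [ "$second" -ge 64 ] && [ "$second" -le 127 ]; then return 0; fi
    return 1
}

# 100.72.13.5 is a made-up example of a WAN address shown by a router.
if is_non_public_ipv4 "100.72.13.5"; then
    echo "WAN address is not public: the ISP is most likely doing NAT"
else
    echo "WAN address looks public"
fi
```

Even when the WAN address looks public, it is still worth comparing it with the address that an external service sees, since the two can differ.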

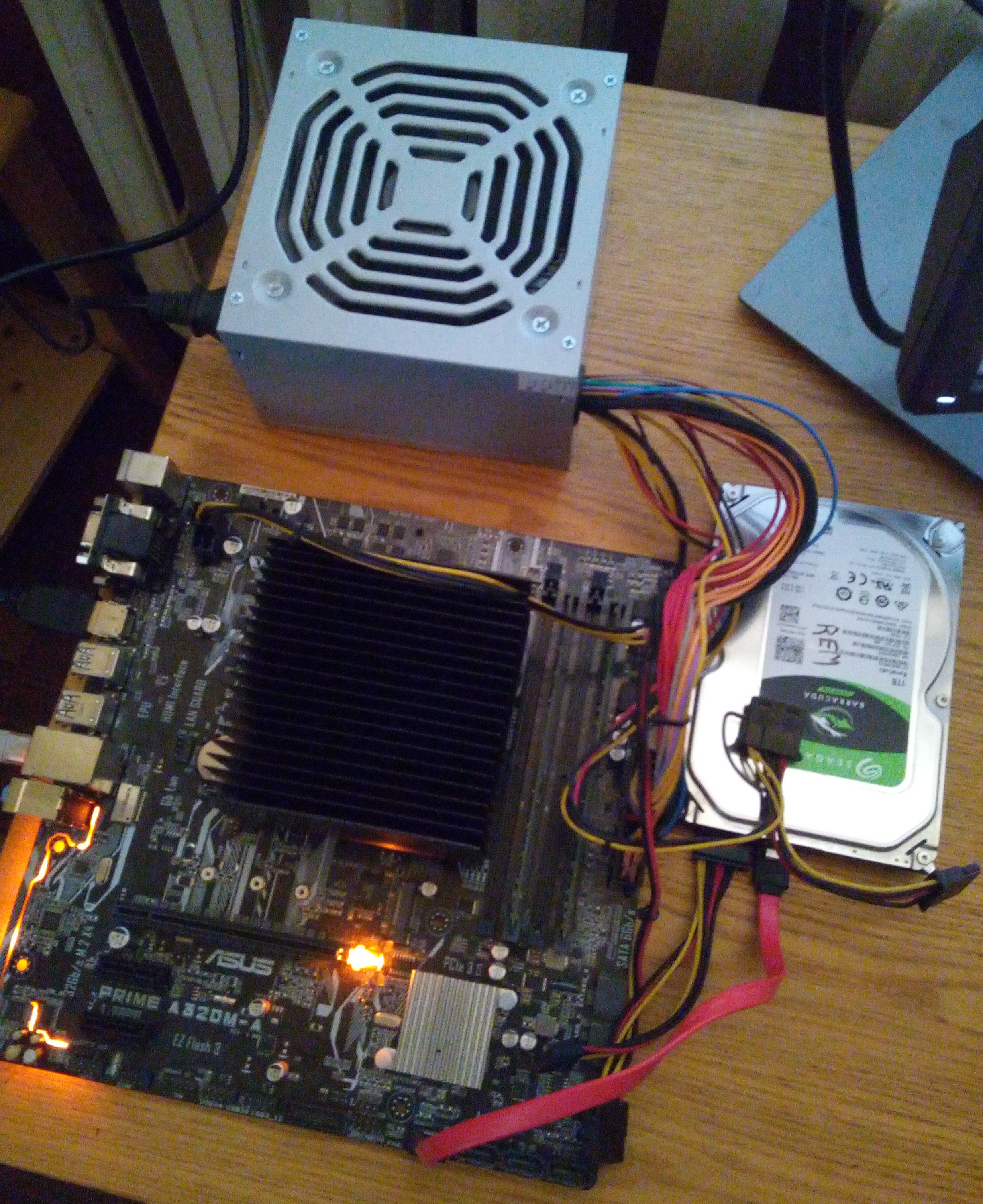

Besides, it's not like i need even dozens of addresses, just a few for my homelab, so it definitely feels more complicated than it should be, especially considering how simple my hardware actually is. Essentially, i have a 200GE CPU with a TDP of only 35W, about 16 GB of the cheapest RAM i could buy and also a 1 TB HDD, because i cannot afford any of the public backup services:

So what can be done about it?

Is it possible to work around this with the ISP's own services? Of course it is:

The ISP in question does offer static IPv4 addresses for about 6 euros per month, if you decide to use their business internet package, which is also a little bit more expensive than their regular one (and for some reason the speeds indicated are lower than those of the consumer package?). Now, IPv6 addresses would also be an option, since they only cost 1 euro, but sadly most of the residential networks in Latvia don't support IPv6 at all, nor did my university network!

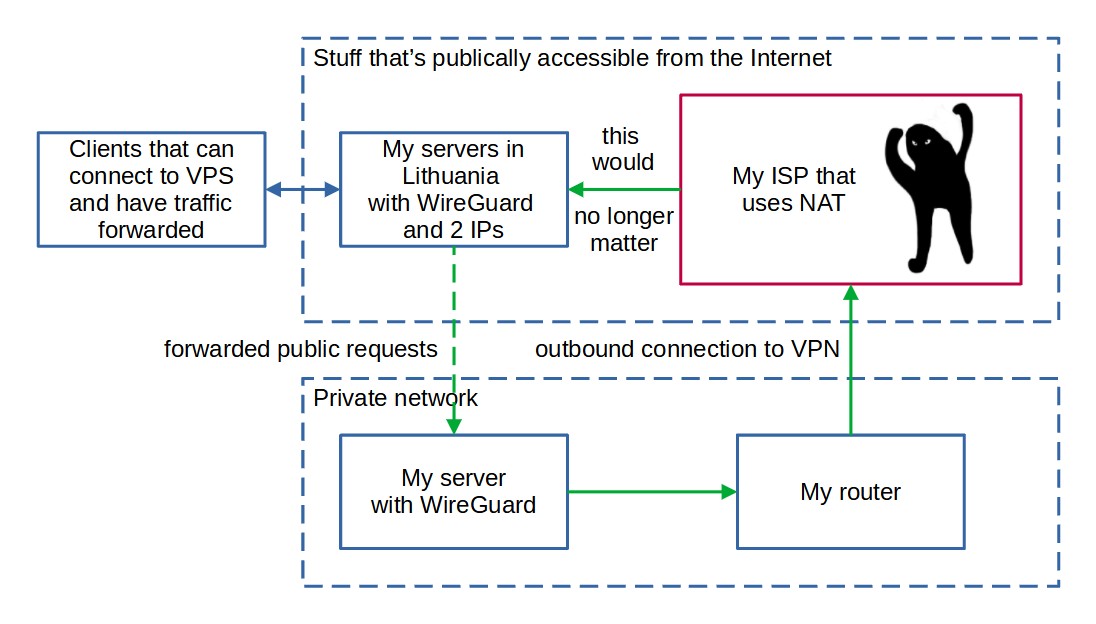

But still, why not just use the ISP's offering and call it a day? Mostly because i'm trying to minimize my homelab expenses due to the salaries here not being awfully good, but also because i'd like to explore alternative solutions which could work behind any ISP's network, without having to contact them and beg them for public IP addresses. In the past, i've run setups that use OpenVPN, but now there is a supposedly better option - WireGuard. In such a VPN setup, i could direct all of the public traffic to one of the VPS's public IP addresses and then have the VPS forward all of it to my local server, which would connect to the VPS by itself:

Now, this would require a bit of configuration, would use up a bit of the CPU resources (OpenVPN is especially bad at this) and would also increase both the ping times and the overall network load, but for homelab scenarios it's definitely suitable! Furthermore, such an approach would allow me to easily migrate between different ISPs in the future without worrying much about the stability of this setup, because it would mostly depend on the VPS provider.

You see, the aforementioned hosting provider, Time4VPS allows adding more than 1 public IP address per server, at the cost of just... uhh, 20 euros per address!?

It was actually cheaper when i last checked, but it seems like the IPv4 exhaustion is affecting even them! That would still be cheaper than the ISP's offering after approx. 3 to 4 months of using it. Hmm, what other alternatives are there? Well, i could get one of their VPSes and use it to forward all of the traffic to my local server, but that'd also incur costs every month, which, while still lower than my ISP's offering, would just be a waste of resources in general - it's not like i actually need a VPS with 2 GB of RAM to shuffle some network packets back and forth:

(that said, the servers are pretty awesome for general computing tasks and i use them for most of my infrastructure ❤️; feel free to give them a go)

Well, maybe i could just use an offering from another VPS provider? I am under no illusions that i can actually afford AWS, GCP or even Azure for any of my cloud services or homelab related stuff, but thankfully Scaleway has an offering called Stardust, which is indeed very cost effective:

The only thing i really dislike is how they obfuscate the monthly expenses and only show the hourly values (0.0025 euros per hour comes out to just under 2 euros per month), but even so, it would only get more expensive than the Time4VPS offering after about 11 months of usage and has the benefit of the costs being dependent on the time that the instance was used for. Thankfully, they at least have a useful calculator down the page, which allows you to make sure that you're understanding their pricing model correctly:

This is good for a number of reasons. For example, if i try setting up WireGuard and discover that it simply won't work, then i can remove the instance after a day or two and pay only for that time, as opposed to Time4VPS, which requires a commitment of at least a month. Also, Scaleway actually charges your card automatically, as opposed to Time4VPS, which requires you to manually press a button on the online bill for them to charge you (which may be okay for annual billing as i do, but is annoying for monthly billing).
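The price comparison can be double-checked with a bit of arithmetic. This is only a rough sketch: the three prices are the ones quoted in this post, while the 30-day month and treating the 20 euros for the extra address as a one-time cost are my assumptions. The function name is made up as well.

```shell
# Hypothetical helper that prints the monthly cost and the break-even points.
cost_summary() {
    hourly_rate=0.0025   # Scaleway Stardust, euros per hour (from the post)
    extra_ip_cost=20     # Time4VPS additional IPv4 address, euros (assumed one-time)
    isp_monthly=6        # ISP static IPv4 address, euros per month (from the post)

    awk -v h="$hourly_rate" -v ip="$extra_ip_cost" -v isp="$isp_monthly" 'BEGIN {
        monthly = h * 24 * 30   # assumes a 30-day month
        printf "Stardust per month: %.2f EUR\n", monthly
        printf "Extra IP vs ISP break-even: %.1f months\n", ip / isp
        printf "Stardust vs extra IP break-even: %.1f months\n", ip / monthly
    }'
}

cost_summary
```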

You just need to be careful, since Stardust instances are pretty low on stock most of the time because of the large demand for them:

All of these complications would probably make some people question the feasibility of the entire setup and instead suggest that they look at a few simple SSH tunnels instead. That could work with a few ports, for sure, but in our case, i want ALL of the ports of the local server to be available - we're basically recreating a DMZ here, for convenience. So, in this case, instead of a server that has 2 public IPs, i instead settled on the Stardust instance, where i would forward all of the ports that i feasibly can, however apart from that the setup remains the same as in the image above.

Server configuration and setting up WireGuard

Now, the images above might suggest that you use Debian, but after some testing, it's probably a better bet to go with Ubuntu for the time being. In case you're interested to know what the problems with Debian are, you can have a glance at the chapter below. But for now, i'm describing the setup with Ubuntu.

First, we'll set up the only port that we won't be forwarding to the local server - the one that we need for SSH. Since we don't really care much for connecting to this particular server under normal circumstances, it's totally fine for sshd to be running under a non-standard port. In this instance, we'll be changing the port to 2222, which can be done by editing the "/etc/ssh/sshd_config" file:

Port 2222

After which we restart the service:

sudo service sshd restart

Then, all of the new connections need to go through port 2222. Now, let's continue with installing WireGuard on both my local server and this proxy server. With Ubuntu, there's no need to check the unstable package repositories, WireGuard can be installed directly:

sudo apt update

sudo apt install wireguard

After this is done, we can proceed with the actual WireGuard configuration. First, the stuff that's common with most configurations: we'll want to create private and public keys so that the servers can connect to one another securely:

sudo su

cd /etc/wireguard

(umask 077 && wg genkey > wg-private.key)

wg pubkey < wg-private.key > wg-public.key

Now we'll need to create the actual configuration for WireGuard in the "/etc/wireguard/wg0.conf" file (create it if it doesn't exist), first for the local server:

[Interface]

PrivateKey = LOCAL_PRIVATE_KEY

Address = 192.168.4.2

[Peer]

PublicKey = SERVER_PUBLIC_KEY

AllowedIPs = 192.168.4.1/32

Endpoint = SERVER_PUBLIC_IP:65535

PersistentKeepalive = 24

And then one config for the remote server that has a public IP address:

[Interface]

PrivateKey = SERVER_PRIVATE_KEY

ListenPort = 65535

Address = 192.168.4.1

[Peer]

PublicKey = LOCAL_PUBLIC_KEY

AllowedIPs = 192.168.4.2/32

After this is done, it is then possible to enable the WireGuard service on both the remote and the local servers:

sudo systemctl enable wg-quick@wg0

sudo systemctl start wg-quick@wg0

sudo systemctl status wg-quick@wg0

At this point we have the WireGuard configuration files and, after starting the service, everything appears to be working just fine:

After this is done, we should be able to access our local server from the remote one, using the IP address that we chose for it in the configuration:

ping 192.168.4.2

Which should now work:

As a matter of fact, you can even log in to the local server from the remote one and check whether everything's in order:

Eureka! Now all that's left is to configure some iptables rules for forwarding the traffic from ALL of the ports of the remote server (except for the SSH port and the WireGuard port) to the local one.
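Should the ping not go through at this stage, one thing worth ruling out is a port mismatch: the port in the local server's Endpoint line has to be the same one that the remote server listens on. Below is a small sketch of such a check. The function name is made up for this example and it assumes that you have copies of both config files on one machine.

```shell
# Hypothetical helper: compares the ListenPort of the remote server's config
# with the port at the end of the Endpoint line in the local server's config.
check_wg_ports() {
    server_conf=$1
    client_conf=$2
    listen_port=$(awk -F'=' '/^[ \t]*ListenPort/ { gsub(/[ \t]/, "", $2); print $2 }' "$server_conf")
    endpoint_port=$(awk -F'=' '/^[ \t]*Endpoint/ { gsub(/[ \t]/, "", $2); n = split($2, parts, ":"); print parts[n] }' "$client_conf")
    if [ -n "$listen_port" ] && [ "$listen_port" = "$endpoint_port" ]; then
        echo "OK: both sides use port $listen_port"
    else
        echo "MISMATCH: ListenPort=$listen_port, Endpoint port=$endpoint_port"
        return 1
    fi
}

# Usage (the file names are placeholders for copies of the two configs):
#   check_wg_ports ./remote-wg0.conf ./local-wg0.conf
```

The chosen port also has to stay out of the port ranges that get forwarded to the local server later on, otherwise the tunnel's own traffic would get redirected.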

Port forwarding

Now comes the part that i absolutely hate the most, because iptables in Linux is rather confusing and doesn't seem at all user friendly. Essentially, we'll now need to tell the firewall to forward all of the incoming TCP and UDP packets to the local server, for all of the ports apart from two: the non-standard SSH port that we reserved (2222) and the one that's needed by WireGuard itself (65535 in this case). This will help us achieve the DMZ setup, since we're basically copying a small subset of a router's functionality in software at this point.

First, let's enable IPv4 forwarding for the OS itself, by editing the "/etc/sysctl.conf" file and adding the following line:

net.ipv4.ip_forward=1

Then we can make the changes take effect by running the following, and also make sure that it worked (the second command should print 1):

sudo sysctl -p

cat /proc/sys/net/ipv4/ip_forward

Once that is working, we'll execute the following to set up all of the rules that we need to forward all of the traffic for ports 1-2221 and 2223-65534, for both TCP and UDP, to 192.168.4.2 (our local server) and allow it to send responses:

# TCP rules

sudo iptables -A FORWARD -i ens2 -o wg0 -p tcp --syn --dport 1:2221 -m conntrack --ctstate NEW -j ACCEPT

sudo iptables -t nat -A PREROUTING -i ens2 -p tcp --dport 1:2221 -j DNAT --to-destination 192.168.4.2:1-2221

sudo iptables -t nat -A POSTROUTING -o wg0 -p tcp --dport 1:2221 -d 192.168.4.2 -j SNAT --to-source 192.168.4.1

sudo iptables -A FORWARD -i ens2 -o wg0 -p tcp --syn --dport 2223:65534 -m conntrack --ctstate NEW -j ACCEPT

sudo iptables -t nat -A PREROUTING -i ens2 -p tcp --dport 2223:65534 -j DNAT --to-destination 192.168.4.2:2223-65534

sudo iptables -t nat -A POSTROUTING -o wg0 -p tcp --dport 2223:65534 -d 192.168.4.2 -j SNAT --to-source 192.168.4.1

# UDP rules

sudo iptables -A FORWARD -i ens2 -o wg0 -p udp --dport 1:2221 -m conntrack --ctstate NEW -j ACCEPT

sudo iptables -t nat -A PREROUTING -i ens2 -p udp --dport 1:2221 -j DNAT --to-destination 192.168.4.2:1-2221

sudo iptables -t nat -A POSTROUTING -o wg0 -p udp --dport 1:2221 -d 192.168.4.2 -j SNAT --to-source 192.168.4.1

sudo iptables -A FORWARD -i ens2 -o wg0 -p udp --dport 2223:65534 -m conntrack --ctstate NEW -j ACCEPT

sudo iptables -t nat -A PREROUTING -i ens2 -p udp --dport 2223:65534 -j DNAT --to-destination 192.168.4.2:2223-65534

sudo iptables -t nat -A POSTROUTING -o wg0 -p udp --dport 2223:65534 -d 192.168.4.2 -j SNAT --to-source 192.168.4.1

We should now be able to check whether it has been set up correctly, by attempting to access various ports on our local server through the remote one. In this case, we can use iperf, by specifying a particular server port and either TCP or UDP for it. Here, the first row in each pair is the command to run on the local server and the second row is the client command:

sudo iperf -s -p 1024

iperf -c rem.servers.kronis.eu -p 1024

sudo iperf -s -p 1024 -u

iperf -c rem.servers.kronis.eu -p 1024 -u

iperf -s -p 32768

iperf -c rem.servers.kronis.eu -p 32768

iperf -s -p 32768 -u

iperf -c rem.servers.kronis.eu -p 32768 -u

If it works, both the tests with those and any other ports should succeed:

Furthermore, it should also be possible to connect to the proxy server through SSH with the previously set port. To persist these rules across restarts, we'll need some additional software. This should only be done AFTER we're sure that everything works:

sudo apt install iptables-persistent

Then, all that's left is to restart the servers and make sure that everything still works after the restart and that the services start automatically:

sudo reboot now

There you have it! Enjoy your DMZ and working around your ISP's limitations with essentially minimal expenses!
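As an aside, the twelve forwarding rules from this section differ only in the protocol and the port range, so they could also be generated with a loop. The following is a dry-run sketch that only prints the commands. The function and variable names are made up for this example, while the interface names and addresses are the ones used in this post and would need to be adjusted for a different setup.

```shell
# Values taken from the rules in this post; adjust them for your own setup.
WAN_IF=ens2
WG_IF=wg0
LOCAL_IP=192.168.4.2
PROXY_IP=192.168.4.1

# Hypothetical helper: prints the iptables commands instead of running them.
print_forward_rules() {
    for proto in tcp udp; do
        # --syn is only valid for TCP
        if [ "$proto" = tcp ]; then syn="--syn "; else syn=""; fi
        for range in 1:2221 2223:65534; do
            # DNAT expects the port range with a dash instead of a colon
            dnat_range=$(echo "$range" | tr ':' '-')
            echo "iptables -A FORWARD -i $WAN_IF -o $WG_IF -p $proto ${syn}--dport $range -m conntrack --ctstate NEW -j ACCEPT"
            echo "iptables -t nat -A PREROUTING -i $WAN_IF -p $proto --dport $range -j DNAT --to-destination $LOCAL_IP:$dnat_range"
            echo "iptables -t nat -A POSTROUTING -o $WG_IF -p $proto --dport $range -d $LOCAL_IP -j SNAT --to-source $PROXY_IP"
        done
    done
}

print_forward_rules
```

After reviewing the printed commands, they could be applied by piping the output into a root shell, for example with `print_forward_rules | sudo sh`.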

Why NOT to use Debian (for now)

Of course, things might not be quite as easy in some setups, since WireGuard needs kernel modules to be loaded. While this could result in better overall performance of the software, loading kernel modules sometimes is a hellish undertaking because nothing likes to work as one would expect.

If it fails and you get something like the following:

[#] ip link add wg0 type wireguard

RTNETLINK answers: Operation not supported

Unable to access interface: Protocol not supported

In that case the first thing to do would probably be to attempt restarting the device, if you just installed WireGuard. However, if the problem persists, you probably don't have the kernel module installed or enabled:

sudo modprobe wireguard

If you get the following error:

modprobe: FATAL: Module wireguard not found in directory /lib/modules/4.19.0-6-amd64

That means that you'll most likely need to mess around with DKMS to get everything working correctly:

sudo apt update && sudo apt install -y linux-headers-$(uname -r)

sudo dkms status

sudo dkms build wireguard/1.0.20210124

sudo dkms install wireguard/1.0.20210124

sudo modprobe wireguard

If you get an error message like the following:

E: Unable to locate package linux-headers-4.19.0-6-amd64

E: Couldn't find any package by glob 'linux-headers-4.19.0-6-amd64'

E: Couldn't find any package by regex 'linux-headers-4.19.0-6-amd64'

That probably means that you'll need to choose which headers to install manually, with the same major version and the closest minor release being your best bet:

sudo apt-cache search linux-headers

Of course, in that case you might get problems with DKMS, which will ask you to install a non-existent package:

Error! Your kernel headers for kernel 4.19.0-6-amd64 cannot be found.

Please install the linux-headers-4.19.0-6-amd64 package,

or use the --kernelsourcedir option to tell DKMS where it's located

Since Debian has failed at providing us with the kernel headers for the version that is actually running, we'll need to sort of hack around this and point DKMS to the folder directly. First, let's install the closest available headers and find where they are located:

sudo apt install -y linux-headers-4.19.0-13-amd64

sudo dpkg -L linux-headers-4.19.0-13-amd64

After that, you should be able to force DKMS to use the directory for kernel sources, even though the versions of the installed kernel headers (4.19.0-13) and the actual running kernel (4.19.0-6) differ:

sudo dkms status

sudo dkms build --kernelsourcedir /usr/src/linux-headers-4.19.0-13-amd64/ wireguard/1.0.20210124

sudo dkms install wireguard/1.0.20210124

sudo modprobe wireguard

Of course, that's still likely to fail, because kernels are awfully finicky like that:

modprobe: ERROR: could not insert 'wireguard': Exec format error

It seems like what's actually required here is to install a different, unstable kernel version that's actually supported by WireGuard. Or, alternatively, one could just use a less stable distro that's likely to have a more recent version of the kernel and maybe even official support for WireGuard, like Ubuntu. Isn't it a bit awkward to just give up midway through the install process and start again with a different OS? Sure, but then again, no one really has the time or energy to repeatedly bang their head against the wall of GNU/Linux being a broken OS more often than it is not. Therefore this choice makes perfect sense, since i just want things to work, not to have them work on a particular distro:

Most of the config on Ubuntu is actually basically the same, except that you no longer need to use Debian's unstable repos:

sudo sh -c "echo 'deb http://deb.debian.org/debian/ unstable main' >> /etc/apt/sources.list.d/unstable.list"

sudo sh -c "printf 'Package: *\nPin: release a=unstable\nPin-Priority: 90\n' >> /etc/apt/preferences.d/limit-unstable"

Debian will probably get there once the software has been tested and incorporated into the stable branch, with support in the mainline kernel, but until then Ubuntu or other distros will simply have to work in its place.

Also, as for saving the iptables rules, some sources recommend using this package:

sudo apt install netfilter-persistent

sudo netfilter-persistent save

sudo systemctl enable netfilter-persistent

But personally i found it to be broken on both Debian and Ubuntu: it said that it had saved the changes, but nothing was kept after a restart.

Summary

In the best traditions of GNU/Linux, you can definitely get what you need working, if you don't value your own time. I spent the entirety of my Sunday setting all of this up, mostly because Debian kept breaking in a variety of ways and even when i used Ubuntu, some packages (like "netfilter-persistent") simply didn't do their job.

Furthermore, for some odd reason, restarts sometimes broke the entire setup: even if the configuration worked before the restart and the services were enabled, pinging the server didn't work afterwards:

In short, pray that you won't need to use Linux for networking that often, because it sometimes is pretty broken and doesn't really help you achieve the results that you desire:

For some reason, a reinstall of the VPS and reconfiguring everything like the above a few times did seem to help with it and eventually it started working. Yet, the UX and DX should both get a bit more attention and debugging these issues is a tad weird, especially if you're not a networking expert (which i am not) - my master's degree didn't really prepare me for using iptables efficiently and it's not like there are that many cookbooks available online for how to get things done. Therefore, i need to do lots of experimentation to get things right.
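When debugging a tunnel that stops responding like this, one thing that might help is checking when each peer last completed a handshake, instead of relying on ping alone. The sketch below parses the tab-separated output of `wg show wg0 latest-handshakes` (a public key followed by a Unix timestamp per line). The function name and the 180 second threshold are made up for this example.

```shell
# Hypothetical helper: reads "<public key><TAB><unix timestamp>" lines on stdin
# and reports the peers whose last handshake is older than max_age seconds.
# The second argument (current time) is optional and mostly there for testing.
stale_peers() {
    max_age=$1
    now=${2:-$(date +%s)}
    awk -v max="$max_age" -v now="$now" '{
        if ($2 == 0)
            print $1 ": no handshake yet"
        else if (now - $2 > max)
            print $1 ": last handshake " (now - $2) "s ago"
    }'
}

# Usage on the proxy server (needs root):
#   sudo wg show wg0 latest-handshakes | stale_peers 180
```

If a peer shows up here after a reboot, the problem is probably in the tunnel itself rather than in the forwarding rules.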

However, with all of that said, WireGuard still seems like a step in the right direction, because it's not the Eldritch horror that OpenVPN was.

Update

It seems like my HDD died the morning after this was written, apparently because there was too much vibration in the car while i was transporting it home from the dorms. Seems like i'll have yet another thing to worry about. Will just have to pull out an install of Clonezilla and see whether i can do anything with it.
