On Anthropic


It's been a while since I last looked across the pond at what the Americans are doing. The problem is that they just keep doing more stuff, to the point where if I were to write about them, it would be a long post, and it becomes less and less possible to hide behind sarcasm and not condemn that whole administration.

At the same time, in absolute terms I am nobody, so that gives me some safety, but as a European, it's also hard to hide my disgust at the blatant disregard for human rights, human life and our European values - especially when there's foreign interference that aims to meddle with the EU and NATO and also supports far-right, borderline fascist parties like AfD.

A measured take on AI and safety

Today, however, I'm not doing a long political post, rather I'd like to just reference this one post that Anthropic recently made: Statement from Dario Amodei on our discussions with the Department of War

In the post, they do a little patriotic blurb about wanting to help Americans and keep them safe, which I don't take issue with. Even though I'd say that the administration is trampling over the legacy of what was once a democratic ally, I doubt anyone would take issue with wanting to keep your people safe - I don't have a problem with Americans in general, the same way I have some friends in Israel while holding similar disdain for the actions of that state.

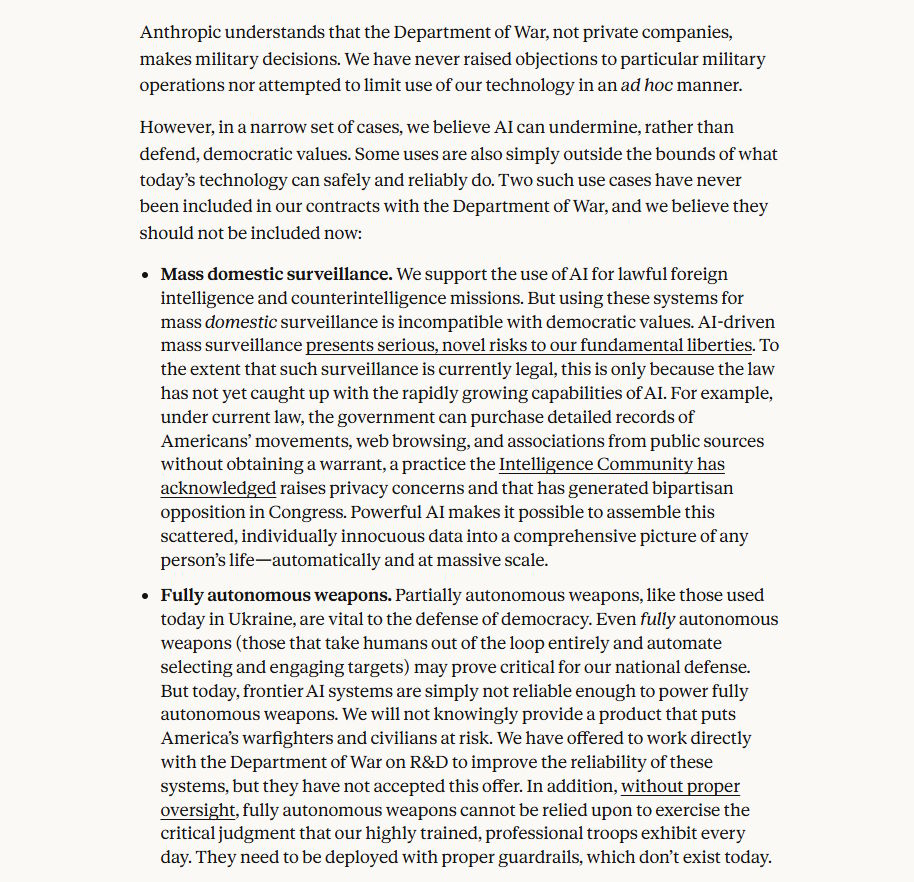

Anthropic then proceeds to reiterate that they don't have "ad hoc" limitations on the use of their models, but that they take issue with two particular use cases where it is impossible to use the technology responsibly: mass surveillance and fully autonomous weapons systems.

They do share a considerable amount of detail, but I'd say that their assessment is correct. With the current state of AI, using it for either mass surveillance or fully autonomous weapons systems would be deeply problematic and, in quite a few cases, also severely illegal - not that this has stopped the current administration before.

No matter how you look at it, their response is fairly measured, and in any other governmental system it should be met with nothing other than agreement from the powers that be, while a lot of other use cases could be pursued, money exchanged, and Americans kept safe. However, that was not the response that they got. Almost immediately, there was backlash from the government, which is ridiculous - since the only reason to object is that they do, as a matter of fact, actually want to use AI for those very unsafe and illegal use cases.

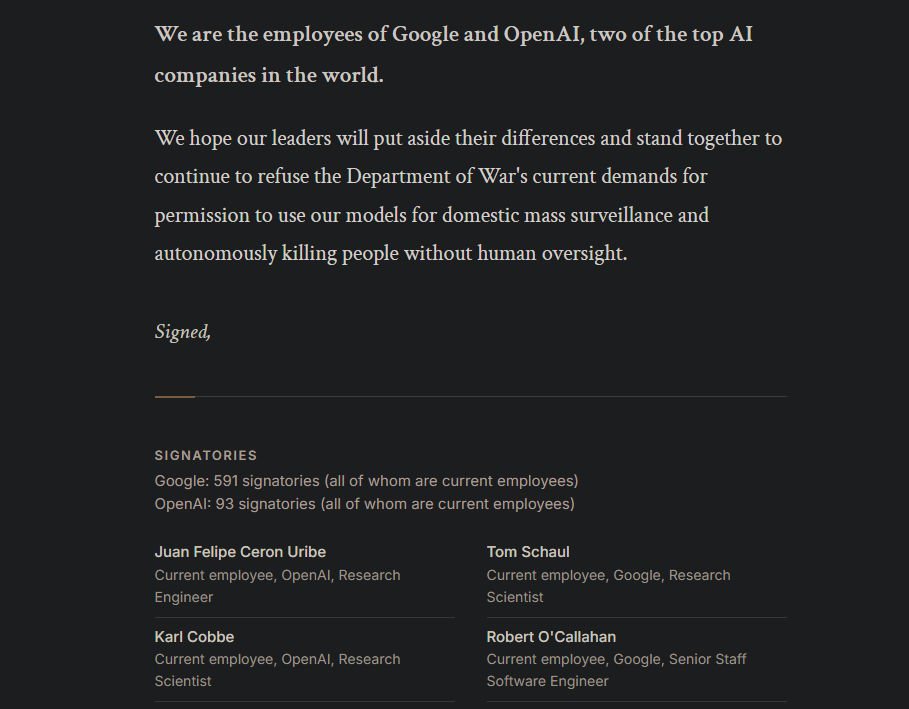

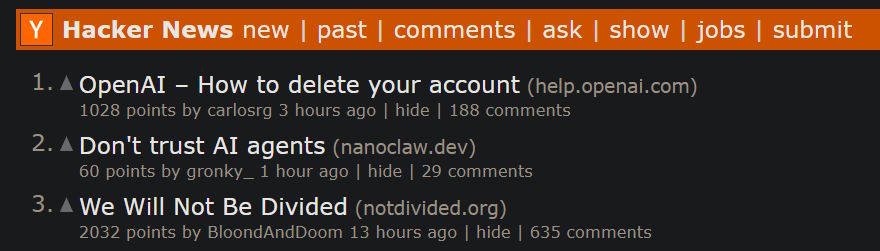

Luckily, a lot of people from both OpenAI and Google got together and signed an open letter in protest of that: We Will Not Be Divided

Here's a snapshot:

Again, the position of not letting technologies that fundamentally cannot take responsibility for their actions kill people feels like the bare minimum. How did that old warning from IBM in the late 70s go?

A computer can never be held accountable, therefore a computer must never make a management decision.

Obviously, that was talking about management - things like possibly wrongfully terminating someone from their work position. Here, we're talking about something way more severe than that. So surely the people in power understand something that was known decades ago, right?

An unhinged take on AI and safety

Right?

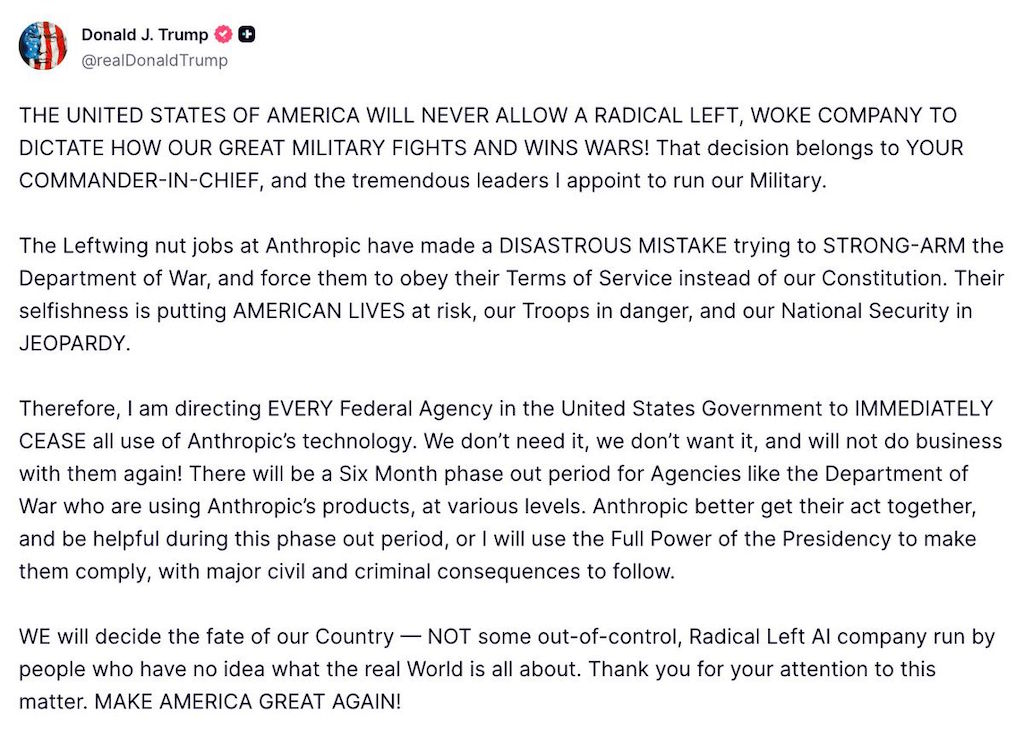

What a bunch of drivel.

It's as disgusting as it is embarrassing:

- claiming that anything you don't like is "radical left" or "woke", exaggerating for the sake of having an easy out to hate it

- claiming that taking issue with illegal mass surveillance and autonomously killing people is somehow "strong arming"

- claiming that those are somehow codified in the US Constitution

- claiming that not wanting to do those things is somehow selfish or endangers your people

- openly saying that you will use and abuse whatever power you have to strong arm them instead, into allowing both illegal and ethically bankrupt actions

- openly threatening to prosecute them for having a shred of human decency

I'm happy I cut the few Trump supporters out of my life, since you have to be either delusional or plain evil to support someone like that.

I think it's useless to point out individual lies when everything that is said is lies. The new status quo seems to be a Gish gallop of bullshit and attempts to shift the Overton window so far right that the burden of proof for explaining why mass surveillance, letting drones autonomously kill people or, quite possibly, eventually repressing anyone who wants to stand up against all that is wrong somehow falls on you - as if all of those are perfectly normal and patriotic.

That's exactly the kind of thing that people who want to see the US turn into Russia 2.0 would say and strive for. The lack of humanity and basic decency on display is appalling.

Corporate profit seeking and capitalism

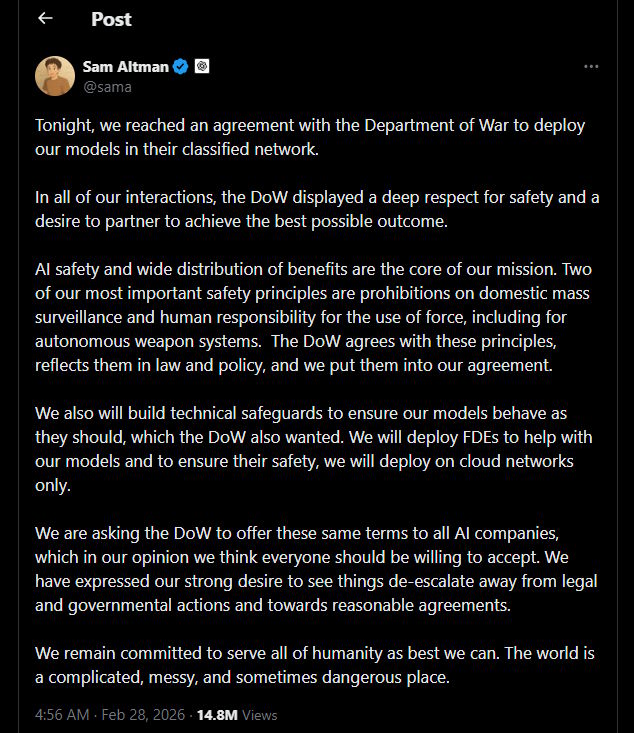

Unfortunately, because of the clown world that we live in, not long after, OpenAI filled in that spot, in search of a nice big fat chunk of money to let them sleep at night no matter what their systems will do. Read it from the source: Sam Altman on Twitter

Maybe that's why a page where you can delete your OpenAI account is on the top of HN right now (and they're predictably faking deletion errors to prevent at least some people from successfully leaving them):

At the same time, you have people in the HN comments explaining that they're employees there and don't see this as a reason to leave their employment. It will probably be increasingly saddening to see how the voices of the employees mean nothing, but also how some of those will have been just performative - because I don't expect everyone on that open letter signatories list to have resigned by the end of the week and to have a cushy position at Anthropic or Google.

The thing is that I can't even easily blame the employees at those companies. When push comes to shove, they are as dependent on money to make a living as anyone else - and uprooting your own life over principle, especially when many others won't do the same, is hard to ask of anyone. For example, if I were making a 6 or even 7 figure income, I'd find it similarly hard to take action like that if I didn't know what the future would hold, even if other large orgs would be more than happy to throw me a whole bunch of cash and comfort if I helped them instead of the previous org. It's just unfortunate to see that, as it is to see others applauding such actions.

Just go with Anthropic

Personally, I voted with my wallet and now have an Anthropic Max subscription - with it, in the past few weekends I've done more than some other people do during an entire week or more of work. I can work on 4 projects in parallel and, when coupled with proper safeguards such as prebuild scripts to enforce the architecture and conventions that I want, linters and code tests, it's a real force multiplier in both software development and elsewhere. It's actually so good, and they give me so much usage, that I can just throw Opus 4.6 at all problems, and having a powerful model always available has eliminated my need to swap between various different ones.
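To make the "prebuild scripts to enforce the architecture" idea a bit more concrete, here is a minimal sketch of what such a check could look like. It is not the actual setup described above: the layer names (`domain`, `web`, `database`), the rule table and the function names are all hypothetical, and the only thing it uses is Python's standard `ast` module to see which packages a file imports.

```python
import ast

# Hypothetical rule set (an assumption for illustration): files under the
# layer named by the key must not import any of the listed top-level packages.
FORBIDDEN_IMPORTS = {
    "domain": {"web", "database"},
}


def imported_modules(source: str) -> set[str]:
    """Return the top-level package names imported by a piece of Python source."""
    names = set()
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, ast.Import):
            names.update(alias.name.split(".")[0] for alias in node.names)
        elif isinstance(node, ast.ImportFrom) and node.module and node.level == 0:
            # level == 0 means an absolute import; relative imports are ignored here
            names.add(node.module.split(".")[0])
    return names


def find_violations(files: dict[str, str], rules=FORBIDDEN_IMPORTS) -> list[str]:
    """Check a mapping of relative path -> source text against the layer rules."""
    violations = []
    for path, source in sorted(files.items()):
        layer = path.split("/")[0]  # first path segment is treated as the layer
        for bad in sorted(imported_modules(source) & rules.get(layer, set())):
            violations.append(f"{path}: layer '{layer}' must not import '{bad}'")
    return violations
```

A prebuild step would read the project's source files into that mapping and fail the build if `find_violations` returns anything, which gives an agent a hard, machine-checkable boundary instead of a convention it might quietly ignore.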

I also benefit greatly from Claude Code - since it seems to stick to the working directory a lot better than even the same models running on AWS Bedrock in the EU when coupled with OpenCode, in addition to having pretty great support for sub-agents and parallel tool calling. They also have a desktop version, and while I don't enjoy it being written in Electron and sometimes being sluggish, it's great from an observability point of view - I can view a list of all the parallel projects being worked on, tool calls and permissions, and manage it all way better than I could with just parallel terminal windows.

Even their Claude Cowork seems promising and I quite like the idea of having a nice UI for agentic work like that (even though Claude Code achieves a lot of the same), albeit the limitation of it only being able to work on my OS drive and home folder feels a bit arbitrary. I am not actually quite sure whether direct computer use will ever quite pan out due to how much we rely on GUI software for all sorts of things, which makes me think that the world should embrace CLI tools a bit more, with GUI tools just sitting in front of them for convenience - structuring our apps more like the MVC pattern, with the GUI solutions being just the view/controller component in some regards, but I digress.

I will say that the end goal for me there would be to have something similar to Jenkins (just an example, not actual Jenkins) or Woodpecker CI, where I could install an orchestrator of some sort on a server and let it take care of directing my homelab servers and personal PC to work on tasks, and occasionally check in on that with my phone or laptop when I'm on the move. That would make it as close to perfect as possible, alongside good permissions settings and sandboxing (e.g. commands that are verboten and running the agents in Docker containers, similar to Woodpecker CI runs). Either way, people already seem to be working on Conductor and numerous similar projects, as well as OpenHands, so it's probably just a matter of time until I get what I want.
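As a rough illustration of the "commands that are verboten" part, a permission check in front of an agent's shell access could be sketched like this. Everything here is an assumption for the sake of the example: the denylist contents and the `is_allowed` function are hypothetical and not taken from Claude Code, Woodpecker CI or any other tool mentioned above.

```python
import shlex

# Hypothetical denylists (assumptions for illustration, not a vetted policy).
DENIED_PROGRAMS = {"rm", "sudo", "shutdown", "mkfs"}
DENIED_SUBCOMMANDS = {("git", "push"), ("docker", "run")}


def is_allowed(command: str) -> bool:
    """Return True if the command passes the (deliberately simple) denylist."""
    try:
        tokens = shlex.split(command)
    except ValueError:
        # Unbalanced quotes and similar: refuse rather than guess at the intent.
        return False
    if not tokens:
        return False
    # Compare on the program name only, so "/bin/rm" is treated like "rm".
    program = tokens[0].rsplit("/", 1)[-1]
    if program in DENIED_PROGRAMS:
        return False
    if len(tokens) > 1 and (program, tokens[1]) in DENIED_SUBCOMMANDS:
        return False
    return True
```

A check like this is easy to sidestep (for example by wrapping a denied program in `sh -c "..."`), which is exactly why it only makes sense as an extra layer on top of running the agents in containers, not as a replacement for that.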

I will admit that Anthropic's subscription replaced pretty much all others for me, since I really don't need to spend around 200 EUR per month on these technologies, after finding one that is good enough. Perhaps that's also largely why they're trying so hard to be loss leaders in the industry and secure as big of a userbase as possible - because now Cerebras Code doesn't get any of my money, even if I otherwise found their products and performance to be great, because now I have one that, even if slower, covers all of my use cases well enough.

Summary

In an ideal world, we'd see "Anthropic EU" emerge, with strategic funding from the greater EU and partnerships with Mistral, to push the EU towards AI innovation and give the company a safe area to operate in, one which better aligns with its values and the greater European culture - a safe haven during this tumultuous time in the US. That could provide a similar push towards innovation and improvements as the first emergence of DeepSeek from the East did towards the then stagnating Western models and technologies.

Sadly, I believe there are complexities that wouldn't be easy to navigate and that make the whole idea unfeasible. Still, I like to daydream about a world where the EU leadership is competent, quickly sets aside 50-100B EUR (I would gladly have my tax money go towards this) and gets an offshoot of the main company going here, with expedited visas, residency permits and housing for whoever needs them. The EU would own this new company and push for partnerships or a merger with Mistral, making the EU a considerable force when it comes to this technological innovation and implementation, but one that cares about human rights and safety.

It would take the equivalent of one year's GDP of the Baltic countries to dominate AI in the world. That's a no-brainer. Like, I'm going to be somewhat poor all my life anyway, we might as well take the output of my work and use it for something meaningful.

If there's one thing we should take from the Americans, then it's their entrepreneurial spirit and risk-taking behavior - building our own sovereign tech instead of just giving our hard-earned money away to a bunch of corpos, consultants and contractors with no long-term ownership over anything. It's the same way that a lot of the digital infrastructure lives under the shoe of Microsoft and AWS, instead of embracing Linux (and contributing back to the community) and supporting regional businesses such as Hetzner. It's like they think that reducing the history of the European nations down to a slow decline in the name of safety and predictability is somehow better than risking it all and taking a shot at greatness, sovereignty and success.

Why can't we live in a world like that? Then again, in such a world, the help towards Ukraine would also look way different, as would the stances on numerous other issues - and that might take tightening our belts (not that RAM prices and rampant capitalism don't do that already, we really should produce our own tech), alongside the populace being principled, ethical and educated. I want to live in that sort of a world.

It's not even that elements of nationalism are inherently bad, but rather that those other people are blatantly corrupt and more often than not plain evil. I hope Americans wake up and vote them out, or put the ones who have committed crimes in prison. Sadly, I won't be holding my breath on that - the rule of law and, increasingly, common sense both seem to be dead or dying. What a world to live in.
